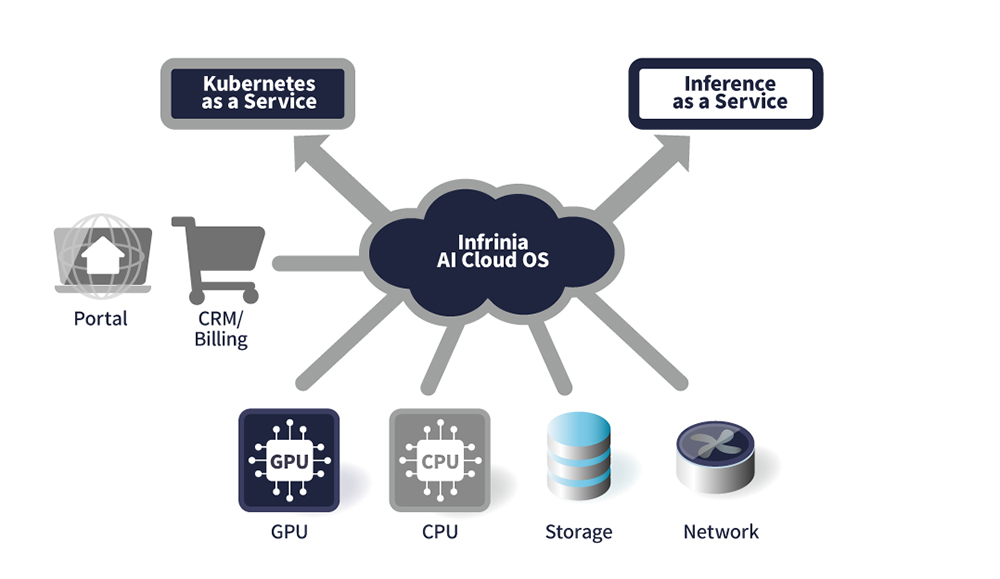

SoftBank has announced that its Infrinia Team has developed Infrinia AI Cloud OS, a software stack designed for AI data centers. SoftBank says the stack is intended to help AI data center operators deliver GPU cloud services at scale by managing GPUs, Kubernetes, and AI workloads, and by supporting multi-tenant Kubernetes as a Service and Inference as a Service.

According to SoftBank, operators that deploy Infrinia AI Cloud OS can build Kubernetes as a Service in a multi-tenant environment and offer Inference as a Service, which exposes Large Language Model inference through APIs, as part of their own GPU cloud services. The company also expects the software to reduce total cost of ownership and operational burden compared with bespoke solutions or in-house development, and to support the full AI lifecycle from model training to inference.

For Kubernetes as a Service, SoftBank says the stack automates “the entire stack (from BIOS and RAID settings to the OS, GPU Drivers, networking, Kubernetes Controllers and Storage)” on GPU platforms including NVIDIA GB200 NVL72. SoftBank also says it supports software-defined, on-the-fly reconfiguration of physical connectivity (NVIDIA NVLink) and memory (Inter-Node Memory Exchange) as customers create, update, and delete clusters. In addition, the company says nodes are allocated automatically based on GPU proximity and NVIDIA NVLink domain, with the aim of reducing latency and maximizing GPU-to-GPU bandwidth for distributed jobs.
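SoftBank has not published how its allocator works. As a rough illustration of what allocation by NVLink domain could mean, the Python sketch below keeps a job inside a single domain and prefers the placement that uses the fewest nodes. The `Node` type, the `nvlink_domain` label, and the `pick_nodes` function are all hypothetical names introduced for this example, and the greedy strategy is an assumption, not a description of Infrinia AI Cloud OS.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    name: str
    nvlink_domain: str  # hypothetical label, e.g. one rack-scale NVLink domain
    free_gpus: int


def pick_nodes(nodes, gpus_needed):
    """Return nodes from a single NVLink domain that cover gpus_needed, or None.

    Illustrative greedy policy (an assumption): never split a job across
    domains, and among feasible domains prefer the one needing fewest nodes.
    """
    by_domain = defaultdict(list)
    for node in nodes:
        if node.free_gpus > 0:
            by_domain[node.nvlink_domain].append(node)

    best = None
    for domain in sorted(by_domain):
        chosen, total = [], 0
        # Take the nodes with the most free GPUs first to keep the node count low.
        for node in sorted(by_domain[domain], key=lambda n: (-n.free_gpus, n.name)):
            chosen.append(node)
            total += node.free_gpus
            if total >= gpus_needed:
                break
        if total >= gpus_needed and (best is None or len(chosen) < len(best)):
            best = chosen
    return best


if __name__ == "__main__":
    inventory = [
        Node("a1", "rack-a", 4),
        Node("a2", "rack-a", 4),
        Node("b1", "rack-b", 8),
        Node("b2", "rack-b", 2),
    ]
    placement = pick_nodes(inventory, 8)
    print([n.name for n in placement] if placement else "no single domain fits")
```

A production scheduler would also have to weigh fragmentation, tenant quotas, and the reconfiguration of NVLink connectivity mentioned above; none of that is modeled here.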

For Inference as a Service, SoftBank says users can deploy inference services by selecting Large Language Models without working with Kubernetes or underlying infrastructure. The company says the service provides OpenAI-compatible APIs for “drop-in integration with existing AI applications,” and “seamless scaling across multiple nodes in core and edge platforms such as NVIDIA GB200 NVL72 and other platforms.”
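In practice, "OpenAI-compatible" usually means a service accepts the same request shape as OpenAI's chat completions endpoint, so existing clients only need a different base URL and key. The Python sketch below builds such a request using the standard library. The base URL, model name, and API key are placeholders invented for this example; SoftBank has not published endpoint details, and the `/chat/completions` path is assumed from the OpenAI convention.

```python
import json
import urllib.request

# Placeholder values (assumptions): SoftBank has not published real endpoints.
BASE_URL = "https://inference.example.com/v1"
MODEL = "example-llm"


def build_chat_request(prompt, api_key, base_url=BASE_URL, model=MODEL):
    """Build an OpenAI-style chat completions request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the first reply text (needs a live endpoint)."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    req = build_chat_request("Summarize NVLink in one sentence.", api_key="PLACEHOLDER")
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
```

Only `build_chat_request` runs offline; `send` would need a reachable endpoint that actually implements the OpenAI response format.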

SoftBank lists secure multi-tenancy and operability features including tenant isolation “through encrypted cluster communications and separation,” automation for operational maintenance including system monitoring and failover, and an API environment for connecting to an AI data center portal, customer management systems, and billing systems. SoftBank says it plans to deploy Infrinia AI Cloud OS initially within its own GPU cloud services, and that the Infrinia Team aims to expand deployment to overseas data centers and cloud environments.

Source: SoftBank