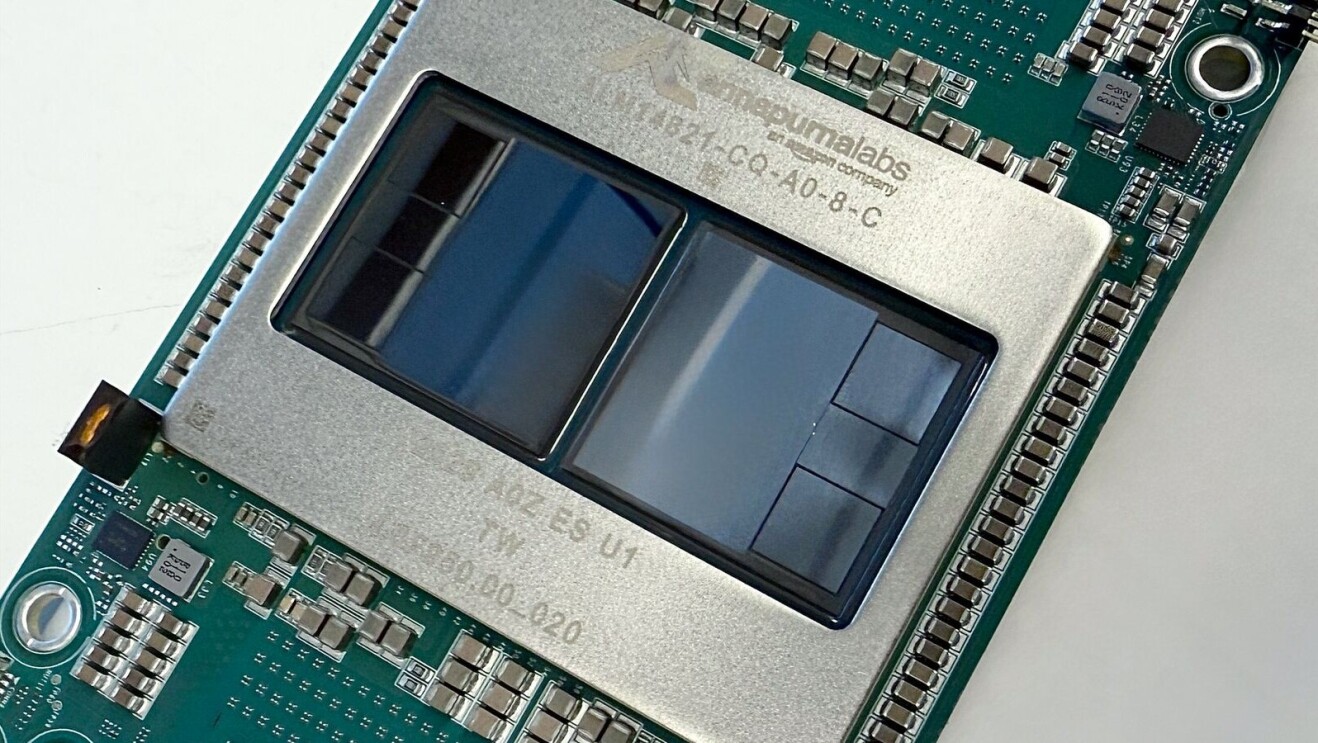

Amazon Web Services (AWS) has announced the general availability of Amazon EC2 Trn3 UltraServers, designed to deliver high performance for demanding artificial intelligence (AI) training and inference workloads in data centers. The new UltraServers are powered by the Trainium3 chip, which is built on three-nanometer process technology, and are intended to accelerate AI development and lower operational costs for hyperscale and enterprise users.

Each Trn3 UltraServer can incorporate up to 144 Trainium3 chips. AWS reports that, compared with the previous-generation Trainium2 UltraServers, the new systems deliver as much as 4.4 times the compute performance, four times the energy efficiency, and nearly four times the memory bandwidth. The servers support up to 362 FP8 petaFLOPS and offer up to four times lower latency for large-scale AI model training and inference tasks. According to AWS, real-world testing using OpenAI’s GPT-OSS model has demonstrated three times higher throughput per chip and four times faster response times than Trainium2.
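As a rough back-of-the-envelope check, the two "up to" figures above imply a per-chip FP8 throughput. The sketch below simply divides one by the other; it assumes the 362 petaFLOPS figure refers to a fully populated 144-chip UltraServer, which the article does not state explicitly, and the function name is illustrative only.

```python
# Figures quoted in the article (AWS-reported "up to" values).
CHIPS_PER_ULTRASERVER = 144
ULTRASERVER_FP8_PFLOPS = 362.0


def per_chip_fp8_pflops(total_pflops: float = ULTRASERVER_FP8_PFLOPS,
                        chips: int = CHIPS_PER_ULTRASERVER) -> float:
    """Implied peak FP8 petaFLOPS per chip.

    Assumption: the server-level figure applies to a fully populated
    UltraServer and divides evenly across its chips.
    """
    return total_pflops / chips


print(f"{per_chip_fp8_pflops():.2f} FP8 petaFLOPS per chip")
```

Under that assumption, the implied peak is roughly 2.5 FP8 petaFLOPS per Trainium3 chip.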

Technical advancements in Trainium3 include optimized interconnects for high-speed chip-to-chip communication, enhanced memory systems that relieve bottlenecks when running large AI models, and a reported 40% gain in energy efficiency over prior versions. These upgrades target data center environments focused on scalability and cost-effectiveness for both AI training and real-time inference workloads.

AWS also introduced new networking features, including NeuronSwitch-v1, which doubles bandwidth within each UltraServer, and enhanced Neuron Fabric networking, which reduces chip-to-chip communication latency to under 10 microseconds. The EC2 UltraClusters 3.0 architecture allows data centers to scale clusters up to 1 million Trainium chips, directly supporting next-generation large language models and concurrent user inference at previously unattainable scale.
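To give a sense of that scale, the sketch below estimates how many UltraServers a cluster of a given chip count would span. It assumes, purely for illustration, that every chip sits in a fully populated 144-chip Trn3 UltraServer; the article does not say how a 1-million-chip cluster would actually be composed, and the function name is hypothetical.

```python
import math

# Figures quoted in the article (AWS-reported upper bounds).
CHIPS_PER_ULTRASERVER = 144
MAX_CLUSTER_CHIPS = 1_000_000


def ultraservers_needed(chips: int,
                        per_server: int = CHIPS_PER_ULTRASERVER) -> int:
    """Number of UltraServers needed to host `chips` chips.

    Assumption: every UltraServer is filled to `per_server` chips,
    except possibly the last one.
    """
    return math.ceil(chips / per_server)


print(ultraservers_needed(MAX_CLUSTER_CHIPS))
```

Under these assumptions, a cluster at the stated upper limit would span just under 7,000 UltraServers.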

Several companies, including Anthropic, Karakuri, Metagenomi, NetoAI, Ricoh, and Splash Music, are using Trainium UltraServers to reduce AI training costs by up to 50%. Amazon reports that Decart is achieving four times faster generative video inference at half the cost of GPUs. Amazon Bedrock is already serving production workloads using Trainium3, demonstrating operational readiness for large-scale AI deployment.

AWS says it is also developing Trainium4, which is expected to provide at least six times the processing performance and four times the memory bandwidth of Trainium3, along with full support for NVIDIA NVLink Fusion for high-speed chip interconnects. According to AWS, this will allow Trainium, Graviton, and GPU resources to be integrated within common rack infrastructure in data centers.

Source: Amazon Web Services