d-Matrix has announced JetStream, a custom input/output (I/O) card designed for large-scale AI inference in data centers. The JetStream I/O accelerator targets ultra-low latency for AI workloads and expands d-Matrix's portfolio, which already includes the Corsair compute accelerators and the Aviator software stack. According to d-Matrix, JetStream delivers improved efficiency and cost-performance for demanding inference workloads in data center environments.

d-Matrix claims that combining JetStream with Corsair accelerators and Aviator software produces a solution that can scale to models exceeding 100 billion parameters. The company says this solution delivers up to 10 times the speed, three times the cost-performance, and three times the energy efficiency of GPU-based solutions. These figures are preliminary estimates based on the Llama 70B model running on a configuration of eight Corsair servers. d-Matrix notes that the performance, cost, and power estimates may change and that individual results may vary.

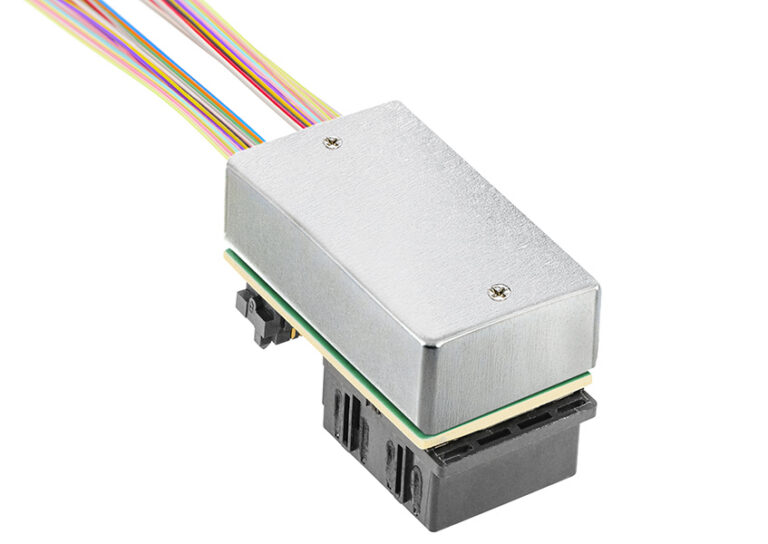

JetStream is architected as a transparent network interface card (NIC) and streaming solution optimized for d-Matrix Corsair accelerators. The card uses a standard full-height PCI Express (PCIe) Gen5 form factor and supports up to 400 Gbps of bandwidth. JetStream is designed to work with off-the-shelf Ethernet switches, which d-Matrix says allows it to be deployed in existing data centers without major infrastructure changes.

"JetStream networking comes at a time when AI is going multimodal, and users are demanding hyper-fast levels of interactivity," said Sid Sheth, d-Matrix co-founder and CEO. "Through JetStream, together with our already-announced Corsair compute accelerator platform, d-Matrix is providing a path forward that makes AI both scalable and blazing fast."

JetStream samples are now available, and d-Matrix expects full production by the end of 2024.

Source: d-Matrix