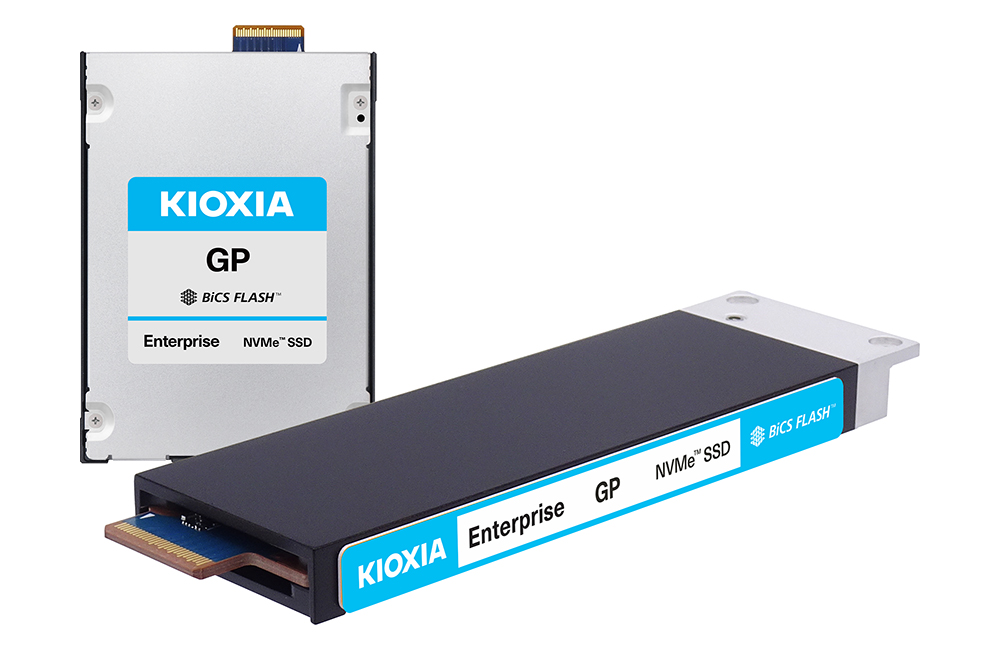

Kioxia has developed a new SSD, the KIOXIA GP Series “Super High IOPS SSD,” aimed at NVIDIA’s Storage-Next architecture and GPU-initiated AI workloads in which the GPU accesses flash directly as an expansion tier to HBM. The company says evaluation samples of the GP Series will be available to select customers by the end of 2026.

The GP Series is designed around the idea that HBM capacity can be a practical limiter for data-intensive AI workflows, and that extending the GPU’s accessible memory space can improve utilization by keeping more data close to compute. In NVIDIA’s Storage-Next initiative, SSDs are expected to be optimized specifically for GPU-initiated access patterns, rather than traditional host-initiated storage IO.

For the media, Kioxia says the GP Series uses its XL-FLASH storage class memory. Compared with its “conventional TLC SSDs,” the company claims higher IOPS, finer-grained access at 512 bytes, and lower power consumption per IO. Those are the characteristics that matter if an SSD is being asked to behave more like an extension of the memory hierarchy than a bulk-storage device, but the proof will be in latency distributions and sustained behavior under mixed AI access patterns, not peak figures.
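
To illustrate why access granularity matters for small random reads, here is a minimal sketch of read amplification. It assumes the device rounds every read up to whole, aligned access units. The 512-byte figure is Kioxia's; the 4,096-byte comparison point and the 512-byte request size are illustrative assumptions, not published specifications, and the function names are hypothetical.

```python
def bytes_transferred(request_bytes: int, access_granularity: int) -> int:
    """Bytes moved to satisfy one aligned read of request_bytes,
    assuming reads are rounded up to whole access units."""
    units = -(-request_bytes // access_granularity)  # ceiling division
    return units * access_granularity


def read_amplification(request_bytes: int, access_granularity: int) -> float:
    """Ratio of bytes moved to bytes the workload actually asked for."""
    return bytes_transferred(request_bytes, access_granularity) / request_bytes


if __name__ == "__main__":
    request = 512  # assumed request size for a small, GPU-initiated lookup
    # 512 B is the granularity Kioxia cites; 4096 B is an assumed baseline.
    for granularity in (512, 4096):
        amp = read_amplification(request, granularity)
        print(f"granularity={granularity} B -> amplification={amp:.1f}x")
```

Under these assumptions, a workload issuing 512-byte reads moves eight times as much data per request at a 4,096-byte access unit as it does at a 512-byte one, which is bandwidth and power spent on bytes the GPU never uses.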

“Kioxia fully supports the NVIDIA Storage-Next initiative and will deliver purpose-built SSDs to effectively address the need for GPU-accessible memory,” said Makoto Hamada, senior director of the SSD Division at Kioxia. “This collaboration is instrumental in shaping the future of AI storage architecture.”

Kioxia also pointed to a separate product for large-scale inference environments: the KIOXIA CM9 Series PCIe 5.0 E3.S SSD, which it says offers 25.6 TB of TLC capacity and endurance of 3 drive writes per day (DWPD). Kioxia said samples of the CM9 Series will begin shipping in Q3 2026.
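
For a sense of scale, the 25.6 TB and 3 DWPD figures can be turned into a write budget. The sketch below assumes decimal units (1 PB = 1,000 TB) and a hypothetical five-year rating period; the article does not state the warranty term, so treat the lifetime figure as illustrative only.

```python
def daily_write_budget_tb(capacity_tb: float, dwpd: float) -> float:
    """Terabytes that may be written per day at the rated endurance."""
    return capacity_tb * dwpd


def lifetime_writes_pb(capacity_tb: float, dwpd: float, years: float) -> float:
    """Total rated writes in petabytes over the rating period
    (365-day years, 1 PB = 1000 TB)."""
    return daily_write_budget_tb(capacity_tb, dwpd) * 365 * years / 1000


if __name__ == "__main__":
    capacity_tb = 25.6  # from the article
    dwpd = 3            # from the article
    years = 5           # ASSUMPTION: rating period is not given in the article

    print(f"daily budget: {daily_write_budget_tb(capacity_tb, dwpd):.1f} TB")
    print(f"lifetime writes over {years} years: "
          f"{lifetime_writes_pb(capacity_tb, dwpd, years):.2f} PB")
```

At the stated capacity and endurance, the drive is rated for roughly 76.8 TB of writes per day; the lifetime total scales linearly with whatever rating period actually applies.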

Source: Kioxia