Phononic has announced the Thermal Kit, an intelligent cooling solution designed for artificial intelligence (AI) data centers. The company claims the kit addresses performance losses caused by thermal throttling and reduces infrastructure overprovisioning costs by delivering targeted, node-level cooling for high-power compute nodes.

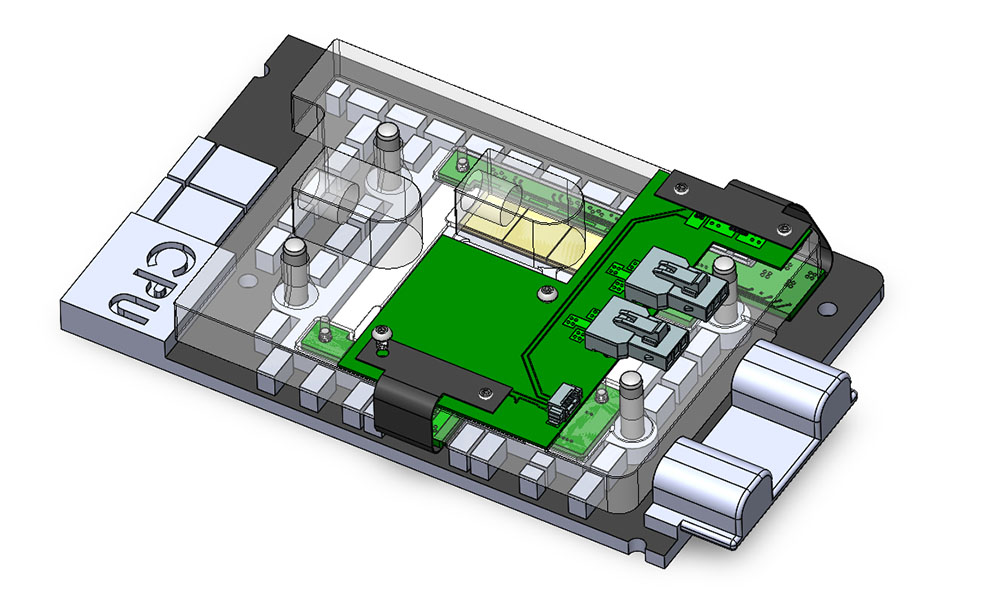

According to Phononic, the Thermal Kit combines high-performance thermoelectric coolers with an integrated mechanical and thermal architecture, and is controlled via accessible firmware and software APIs. The company says this setup enables precise, chip-level thermal management for processors and high-bandwidth memory, and operates as a supplement to existing liquid-cooled data center systems. The system is designed to identify and respond to thermal hotspots in milliseconds, with the aim of maintaining optimal compute temperatures and limiting throttling during variable AI workloads.

The company reports that data center operators currently overprovision cooling capacity by up to 78 percent to accommodate unpredictable AI workload spikes, which wastes both energy and capital expenditure. Phononic states its cooling technology can reduce thermal throttling, potentially minimizing performance drops of up to 30 percent, without redesigning silicon or requiring substantial infrastructure changes. Applications detailed in the release include transformers, generative AI models, large batch training, and large language model inference.
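
To put the two headline figures in concrete terms, the short Python sketch below works through the arithmetic. It is illustrative only. The 1,000 kW cooling requirement and the nominal throughput of 100 units are hypothetical values, and the sketch assumes that "overprovisioned by 78 percent" means installed capacity sits 78 percent above actual need, an interpretation the release does not spell out.

```python
def installed_capacity_kw(required_kw: float, overprovision_pct: float) -> float:
    """Capacity installed when provisioning overprovision_pct above actual need."""
    return required_kw * (1 + overprovision_pct / 100)


def throttled_throughput(nominal: float, drop_pct: float) -> float:
    """Throughput remaining after a performance drop of drop_pct percent."""
    return nominal * (1 - drop_pct / 100)


# Hypothetical inputs; only the 78 and 30 percent figures come from the release.
required_kw = 1000.0
nominal_throughput = 100.0

installed = installed_capacity_kw(required_kw, 78)
unused_headroom = installed - required_kw
remaining = throttled_throughput(nominal_throughput, 30)

print(f"Installed cooling capacity: {installed:.0f} kW")
print(f"Headroom beyond actual need: {unused_headroom:.0f} kW")
print(f"Throughput under a 30% throttling drop: {remaining:.0f} units")
```

Both percentages are upper bounds cited by the company ("up to"), so the results represent the worst case under these assumptions rather than typical figures.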

Phononic claims additional operational benefits: improved utilization and extended life of high-value assets, along with lower facility energy consumption, since secondary cooling loops can run at warmer settings and reduce chiller demand. The company also notes that it holds a position in optical transceiver cooling and states its solutions are deployed with tier 1 hyperscalers and major equipment manufacturers globally.

Matt Langman, Senior Vice President and General Manager of Infrastructure Solutions at Phononic, said, “The Thermal Kit is designed to meet one of the biggest challenges of today’s AI data centers: cooling,” adding, “For operators facing unprecedented power demands for today’s AI workloads, it is mission critical to maintain performance through reductions in thermal throttling and optimized energy use of existing liquid-cooled infrastructure. With this breakthrough, customers can unlock higher compute capability and deliver meaningful data center wide ROI.”

Source: Phononic