As compute density surges, interconnect is no longer just plumbing—it’s becoming one of the biggest power and architecture constraints in modern AI systems.

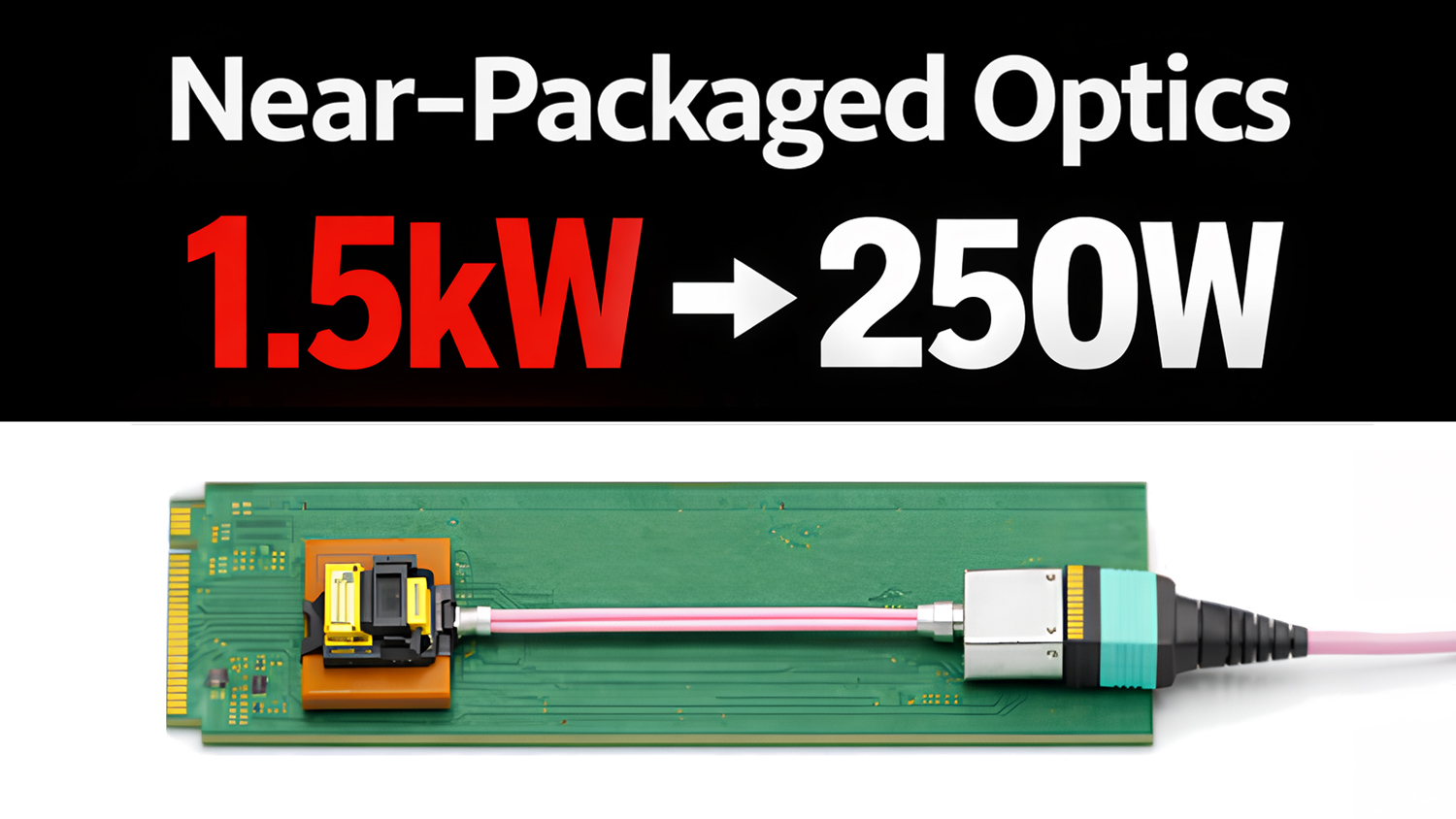

“Think of a 51.2-terabit switch guzzling about one and a half kilowatts of power,” said Rohan Y, Founder and CEO of LightSpeed Photonics. “The pluggables can come down to as low as 250 watts using our technology. That’s huge at scale.”

That shift—from interconnect as an afterthought to a primary power consumer—is forcing data center engineers to rethink how data actually moves through AI systems. As bandwidth requirements explode, the traditional approach of routing signals across a board and out to front-panel optics is starting to break down.

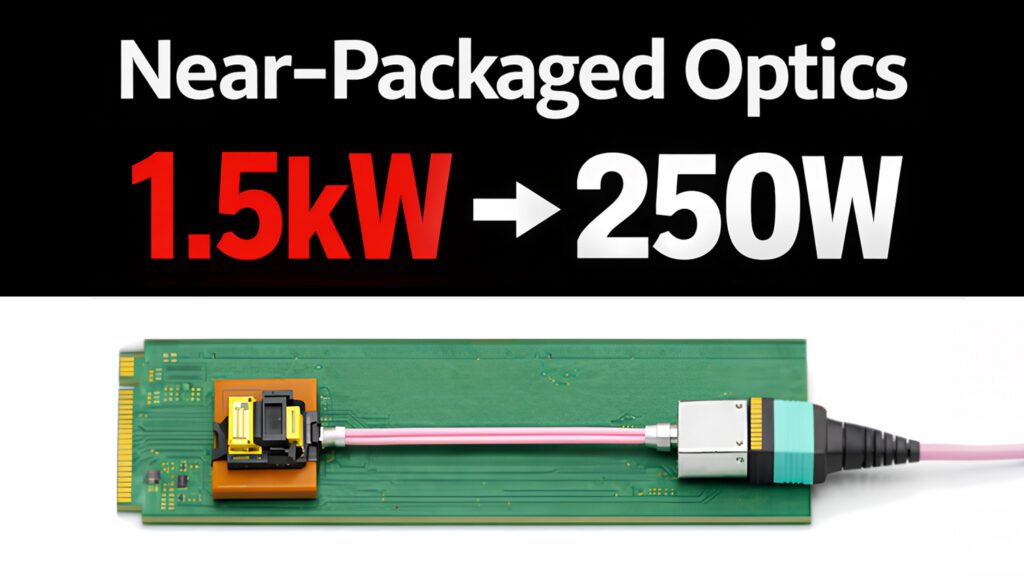

In a recent interview with The Data Center Engineer, LightSpeed outlined why it believes near-packaged optics (NPO) could become a practical bridge between today’s pluggable optics and future architectures, and why that shift matters for power, density, and system design.

The “shoreline problem”: bandwidth wants out, packaging pushes back

At the heart of the issue is a physical constraint.

“People call it the shoreline problem,” Rohan explained. “You have a chip that is very small and you have to get as much data bandwidth out of it… like a really good architectural building, but you have really bad highways.”

The analogy captures the imbalance shaping modern systems. Compute has scaled aggressively, but the pathways that carry data off-chip—PCB traces, connectors, and pluggable modules—have not kept pace. Moving signals from the package to the edge of the board is no longer trivial, and at current data rates, even short electrical paths introduce meaningful loss.

Traditional pluggable optics were designed for flexibility, not for this level of density. They allow engineers to choose different reaches and configurations, but they also require signals to travel farther before reaching the optical interface. As those distances become harder to manage, the interconnect itself begins to dictate system architecture.

Why pluggables burn watts: DSP as the hidden tax

One of the less obvious consequences of long electrical paths is how much power is spent correcting them.

“The DSP [Digital Signal Processor] rebuilds a signal that is transmitted by the compute engine farther on the board,” Rohan said. “That consumes 7, 8, 10 watts of power.”

In other words, a significant portion of interconnect power isn’t used to move data—it’s used to recover degraded signals. As electrical paths lengthen, the system relies more heavily on equalization and signal processing, which adds both power and complexity.

Near-packaged optics changes that equation by shortening the electrical path. When the optical conversion happens closer to the ASIC, the signal arrives cleaner, and much of that recovery overhead can be reduced or eliminated. The improvement is not incremental—it directly targets one of the biggest hidden costs in high-speed interconnect.
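To get a feel for how that per-link overhead adds up, here is a rough back-of-the-envelope sketch. Only the 7 to 10 W range and the 51.2 Tb/s switch capacity come from the interview. The 800 Gb/s module rate and the one-DSP-per-module layout are assumptions made for illustration, and the function names are hypothetical.

```python
# Rough estimate of aggregate DSP power on a pluggable-based switch.
# From the interview: 51.2 Tb/s switch capacity, 7-10 W per DSP.
# Assumed for illustration: 800 Gb/s per module, one DSP per module.

SWITCH_CAPACITY_GBPS = 51_200
MODULE_RATE_GBPS = 800          # assumption, not stated in the interview
DSP_WATTS_QUOTED = (7.0, 8.0, 10.0)


def module_count(switch_gbps: int, module_gbps: int) -> int:
    """Number of modules needed to expose the full switch bandwidth."""
    return switch_gbps // module_gbps


def total_dsp_watts(modules: int, watts_per_dsp: float) -> float:
    """Aggregate DSP power, assuming one DSP per module."""
    return modules * watts_per_dsp


modules = module_count(SWITCH_CAPACITY_GBPS, MODULE_RATE_GBPS)
dsp_totals = {w: total_dsp_watts(modules, w) for w in DSP_WATTS_QUOTED}

if __name__ == "__main__":
    print(f"modules: {modules}")
    for per_dsp, total in dsp_totals.items():
        print(f"{per_dsp:>4.0f} W per DSP -> {total:.0f} W across the switch")
```

Under those assumptions, the switch carries 64 modules, and signal recovery alone lands somewhere between roughly 450 W and 640 W. A different module rate or DSP layout would shift the total accordingly.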

Near-packaged optics vs. co-packaged optics: similar goal, different risk profile

Near-packaged optics is often discussed alongside co-packaged optics (CPO), but the distinction matters.

“The way we are using near packaged optics is very similar to how one would achieve co-packaged optics,” Rohan said. “Only the difference being that instead of the optical engine being inside the compute engine’s package, we put it near the package.”

Both approaches aim to minimize electrical distance, but they diverge in how tightly optics are integrated with compute. CPO places optical engines inside the package, which maximizes density but introduces new challenges in thermal management, manufacturing, and serviceability.

Near-packaged optics sits just outside that boundary. It captures much of the signal-integrity benefit while preserving separation between components.

“It’s having the best of both worlds,” Rohan said. “You’re almost as if you are on the same package… but at the same time, you are separate.”

That separation allows optical components to maintain independent thermal control and, critically, to be replaced without discarding expensive compute hardware. In tightly integrated systems, even a minor optical failure can take down an entire module.

Modularity still matters—but it’s changing shape

One of the enduring advantages of pluggable optics is modularity. Engineers can swap modules, change link types, and adapt systems without redesigning the board.

Near-packaged optics redefines that flexibility rather than eliminating it. “You still get the modularity of the fiber cables… but you solder the electrical side of it,” Rohan explained.

In practice, the optical interface remains modular, but the electrical interface becomes more fixed. That shift forces architects to think more carefully about topology and reach.

“It’s not a limitation. It’s a constraint that you need to work around,” said Rohan.

The tradeoff is clear: less flexibility in exchange for higher density and better efficiency. For many AI workloads, that trade is increasingly acceptable.

Standards, reliability, and the path to adoption

Unlike pluggable optics, which evolved through well-defined standards, near-packaged optics is still early in its lifecycle.

“Standards are generally almost an afterthought,” Rohan said. “You first come with a breakthrough technology, then people start talking about standards.” That means early implementations may not follow familiar paths such as ecosystems built around a multi-source agreement (MSA). Instead, they will likely rely on existing reliability frameworks and platform-specific designs.

“It’s not likely to follow the MSA path or the LPO [linear pluggable optics] path, but that is a blessing in disguise,” he said.

For engineers, this creates both risk and opportunity. The lack of rigid standards allows for optimization, but it also requires more careful evaluation of integration, reliability, and long-term compatibility.

Beyond AI clusters: interconnect as a system enabler

While near-term adoption is driven by AI clusters, the longer-term implications are broader. “Compute, storage, memory disaggregation is going to be a thing, mark my words,” Rohan said.

As systems become more modular, with resources distributed across different nodes, the interconnect becomes the fabric that holds everything together. Performance is no longer defined solely by compute capability, but by how efficiently data can move between components. In that context, interconnect is not just a supporting element—it is a defining one.

Perhaps the most important takeaway is how the power equation has shifted. “Almost one third goes to interconnect,” Rohan noted.

That is a fundamental change. Interconnect is no longer a secondary consideration; it is competing directly with compute for power budget. As a result, engineers are increasingly focused on minimizing energy per bit and optimizing the physical path data takes through the system.

Near-packaged optics fits squarely into that effort by reducing both distance and overhead.
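As a rough illustration of the energy-per-bit framing, the two power figures quoted at the top of the article can be converted to picojoules per bit. This sketch assumes both the 1.5 kW and the 250 W figures describe power spent moving the same 51.2 Tb/s of traffic. That is one reading of the quote rather than something the interview states, and the helper name is hypothetical.

```python
# Convert the quoted power figures into energy per bit.
# Assumption: both figures apply to the same 51.2 Tb/s of throughput.

SWITCH_THROUGHPUT_TBPS = 51.2
QUOTED_BASELINE_WATTS = 1500.0   # "about one and a half kilowatts"
QUOTED_REDUCED_WATTS = 250.0     # "as low as 250 watts"


def picojoules_per_bit(power_watts: float, throughput_tbps: float) -> float:
    """W / (Tb/s) = (J/s) / (1e12 bit/s) = 1e-12 J/bit, i.e. pJ/bit."""
    return power_watts / throughput_tbps


baseline_pj = picojoules_per_bit(QUOTED_BASELINE_WATTS, SWITCH_THROUGHPUT_TBPS)
reduced_pj = picojoules_per_bit(QUOTED_REDUCED_WATTS, SWITCH_THROUGHPUT_TBPS)

if __name__ == "__main__":
    print(f"baseline: {baseline_pj:.1f} pJ/bit")
    print(f"reduced:  {reduced_pj:.2f} pJ/bit")
    print(f"ratio:    {baseline_pj / reduced_pj:.1f}x")
```

On that reading, the figures work out to about 29.3 pJ/bit versus about 4.9 pJ/bit, a sixfold difference. The ratio holds regardless of throughput, since both figures are divided by the same number.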

What changes now

Rohan’s advice to system architects is not to replace one solution with another, but to rethink assumptions.

“Don’t just limit yourself to thinking in terms of pluggables, think of the alternatives,” he explained. At the same time, he emphasized that existing technologies are not going away. “You need pluggables. They’re going to be in the market for the next 10–15 years.”

The future is not a single architecture, but a mix of approaches—pluggables for long reach, co-packaged optics for extreme density, and near-packaged optics filling the space in between.

As AI systems scale, the constraints are no longer purely computational. Power, density, and physical layout are all becoming limiting factors. The interconnect, once treated as infrastructure, is now central to system performance.

That is why approaches like near-packaged optics are gaining attention. They are not simply new components—they are part of a broader shift toward treating data movement as a first-class design problem. And as that shift continues, the question is no longer whether interconnect needs to change, but how far engineers are willing to rethink the systems they build around it.