Rambus has announced its HBM4E Memory Controller IP, which it says is designed to meet rising memory-bandwidth requirements in next-generation AI accelerators and graphics processing units (GPUs). The company positions the controller as an HBM4E interface option with added reliability features for high-bandwidth AI and high-performance computing (HPC) workloads.

Rambus says the HBM4E Controller supports operation at up to 16 gigabits per second (Gbps) per pin, delivering up to 4.1 terabytes per second (TB/s) of throughput to each memory device. For an AI accelerator with eight attached HBM4E devices, Rambus adds, that translates to more than 32 TB/s of aggregate memory bandwidth.
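The per-device and eight-device figures can be reproduced with simple arithmetic. The sketch below is illustrative only: it assumes a 2048-bit data interface per HBM4E device, a width the article itself does not state, and it uses decimal units (1 TB/s = 1000 GB/s). The constant and function names are hypothetical.

```python
# Back-of-the-envelope check of the quoted bandwidth figures.
PIN_RATE_GBPS = 16           # per-pin data rate quoted by Rambus
INTERFACE_WIDTH_BITS = 2048  # ASSUMPTION: data width per device, not stated in the article
DEVICES = 8                  # device count in Rambus's accelerator example


def device_bandwidth_tbs(pin_rate_gbps: float, width_bits: int) -> float:
    """Return peak bandwidth of one device in TB/s (decimal units)."""
    gigabits_per_second = pin_rate_gbps * width_bits
    gigabytes_per_second = gigabits_per_second / 8  # 8 bits per byte
    return gigabytes_per_second / 1000               # GB/s to TB/s


per_device = device_bandwidth_tbs(PIN_RATE_GBPS, INTERFACE_WIDTH_BITS)
total = per_device * DEVICES

print(f"Per device: {per_device:.3f} TB/s")
print(f"{DEVICES} devices: {total:.3f} TB/s")
```

Under that width assumption, the result rounds to the 4.1 TB/s per device that Rambus quotes and lands just above 32 TB/s for eight devices. These are peak figures; sustained bandwidth in a real system would depend on access patterns and controller efficiency.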

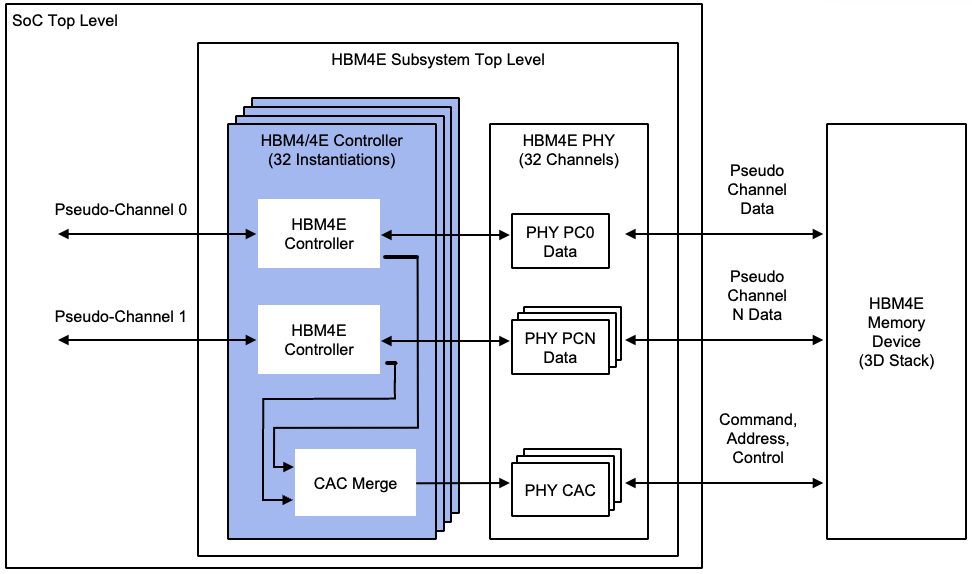

The company says the HBM4E Controller IP can be paired with third-party standard or through-silicon via (TSV) physical layer (PHY) solutions to build a complete HBM4E memory subsystem. Rambus says the subsystem can be implemented in a 2.5D or 3D package as part of an AI system-on-chip (SoC) or a custom base die solution.

Rambus cites AI accelerators, graphics, and HPC as the controller's primary applications, with next-generation AI accelerators and GPUs as the target devices. The company frames the product as a way to relieve the bandwidth bottleneck between memory and processing in data-intensive workloads.

Rambus says the HBM4E Controller IP is available for licensing now and that early-access design customers can already engage with the company. Additional product information is available at rambus.com/interface-ip/hbm.

Source: Rambus