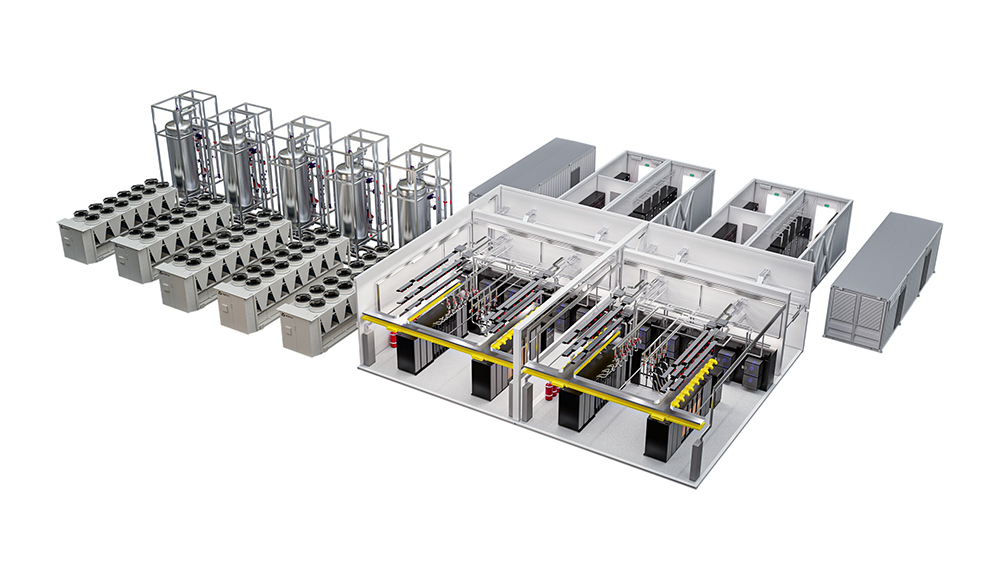

Vertiv has announced new configurations of Vertiv MegaMod HDX, a prefabricated power and liquid-cooling infrastructure solution for high-density computing environments, including artificial intelligence (AI) and high-performance computing (HPC). Vertiv says the new configurations are designed to help operators meet rising power and cooling requirements while optimizing space and deployment speed, and that the models are available globally.

Vertiv MegaMod HDX integrates direct-to-chip liquid cooling with air-cooled architectures for high-density thermal management. Vertiv positions the system for pod-style AI environments and advanced graphics processing unit (GPU) clusters, with support for rack densities from 50 kW to more than 100 kW per rack.

The compact configuration uses a standard module height and supports up to 13 racks with power capacity up to 1.25 MW. The combo configuration uses an extended-height design and supports up to 144 racks with power capacity up to 10 MW.
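As a rough sanity check on those figures, the sketch below divides each configuration's quoted maximum power by its quoted maximum rack count. It assumes, purely for illustration, that the full capacity is split evenly across a fully populated module; Vertiv does not state how capacity is allocated, and the names `CONFIGS` and `avg_kw_per_rack` are hypothetical.

```python
# Figures quoted in the article; the "up to" values are treated as maximums.
CONFIGS = {
    "compact": {"max_racks": 13, "max_power_mw": 1.25},
    "combo": {"max_racks": 144, "max_power_mw": 10.0},
}


def avg_kw_per_rack(max_power_mw: float, max_racks: int) -> float:
    """Average kW per rack under the even-split assumption (illustrative only)."""
    return max_power_mw * 1000 / max_racks


for name, cfg in CONFIGS.items():
    kw = avg_kw_per_rack(cfg["max_power_mw"], cfg["max_racks"])
    print(f"{name}: {kw:.1f} kW per rack")
```

Under that assumption the averages come out at roughly 96 kW per rack for the compact configuration and 69 kW per rack for the combo configuration, both inside the 50 kW to 100 kW-plus range Vertiv cites.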

“Today’s AI workloads demand cooling solutions that go beyond traditional approaches,” said Viktor Petik, Senior Vice President, Infrastructure Solutions at Vertiv. “With the Vertiv MegaMod HDX available in both compact and combo solution configurations, organizations can match their facility requirements while supporting high-density, liquid-cooled environments at scale. Our designs deliver what data centers need most: reliable performance, operational efficiency, and the ability to scale their AI infrastructure with confidence.”

Vertiv says the MegaMod HDX models use a hybrid cooling architecture that combines direct-to-chip liquid cooling with adaptable air systems in a fully integrated, prefabricated pod. The company also says the solutions include a distributed redundant power architecture intended to support continuous operation if one module goes offline, and a buffer-tank thermal backup system intended to help GPU clusters maintain stable operations during maintenance or load transitions.

Vertiv says both configurations draw on its broader portfolio, including the Liebert APM2 uninterruptible power supply (UPS), CoolChip cooling distribution unit (CDU), PowerBar busway system, and Unify infrastructure monitoring. Vertiv also lists related rack and heat-rejection options, including Vertiv racks, Open Compute Project (OCP)-compliant racks, CoolLoop RDHx rear-door heat exchanger, CoolChip in-rack CDU, rack power distribution units, PowerDirect in-rack DC power system, and CoolChip Fluid Network Rack Manifolds.

Source: Vertiv