Ayar Labs and Wiwynn say they’ve formed a strategic partnership to deliver optically connected, rack-scale AI systems built around co-packaged optics (CPO) for hyperscale workloads. The companies are positioning the work as a path to move CPO from component demos into deployable rack-level infrastructure, targeting AI scale-up networks where copper interconnects can become a constraint on performance, system growth, and power efficiency.

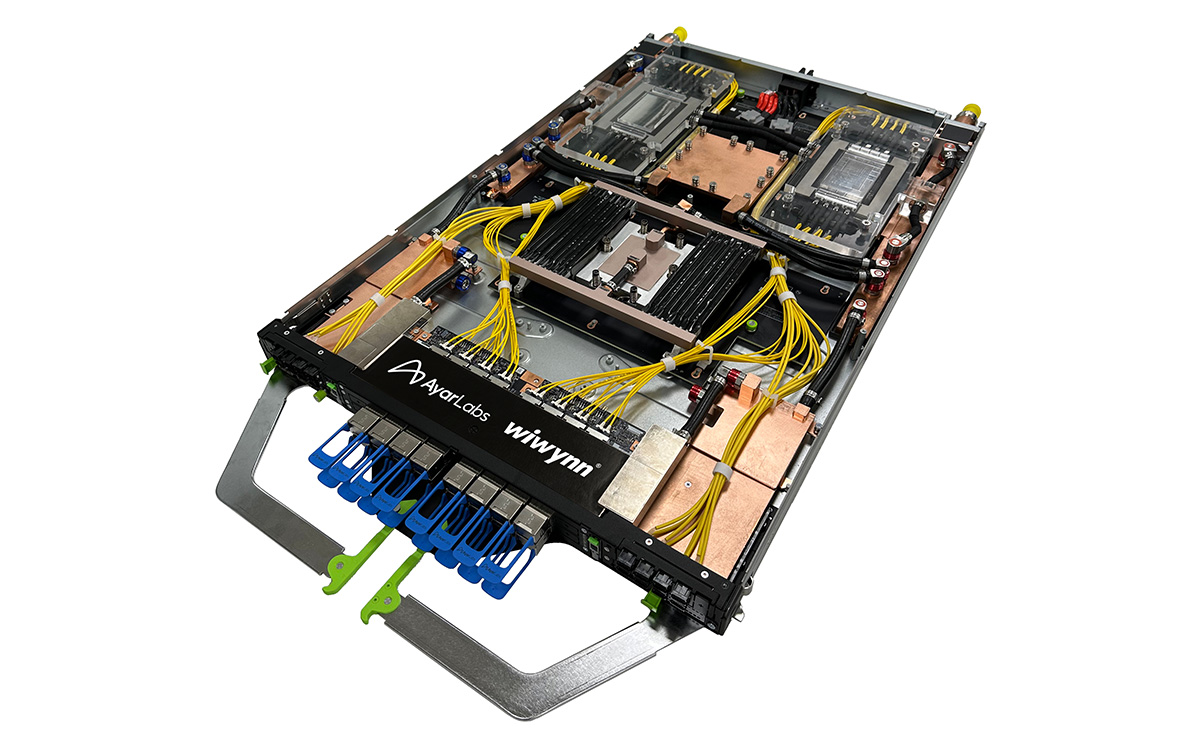

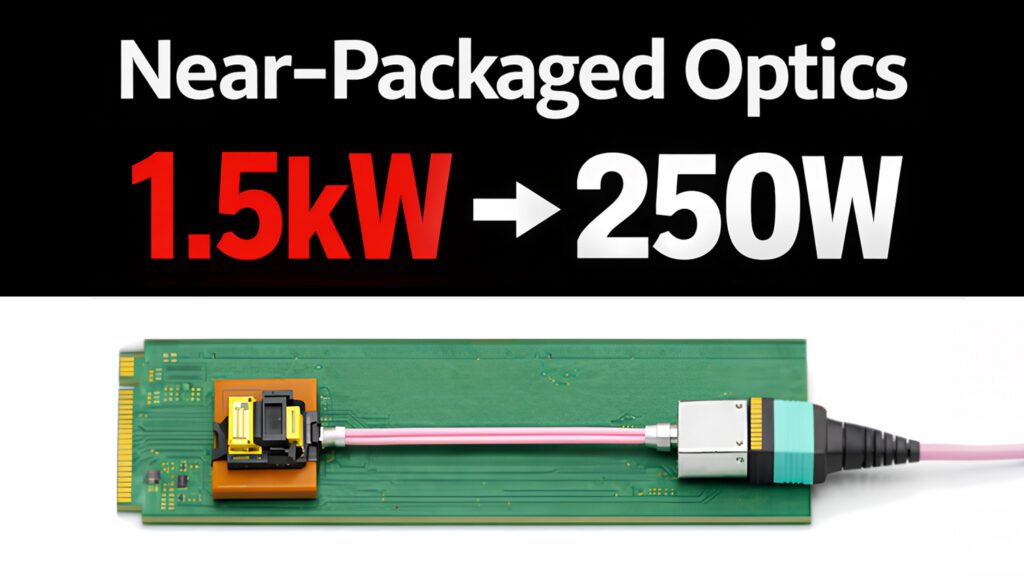

The joint solution integrates Ayar Labs’ AI scale-up CPO technology into Wiwynn’s rack-level architecture, including Ayar Labs’ TeraPHY optical engines powered by the SuperNova remote light source. In the announcement, the companies call out deployment issues they’re aiming to address at the system level: optical fiber management, integration of CPO-enabled AI ASICs, thermal management, power efficiency, and manufacturability.

For data center engineering teams, the practical implication is less about photonics as a standalone technology and more about whether a rack-scale design can be built, cooled, cabled, and serviced at hyperscale. CPO shifts the interconnect medium from electrical to optical, but it also changes how you think about fiber routing, connector access, service procedures, and where the light source sits in the rack. The announcement’s focus on “serviceable system designs” and fiber management is an explicit nod to those operational realities.

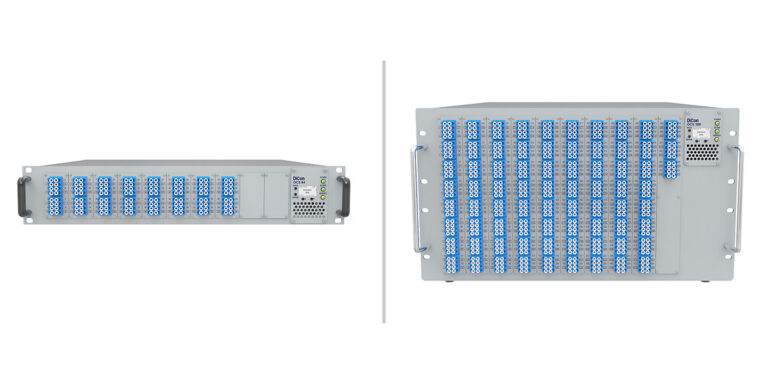

The companies say the rack-scale AI infrastructure is designed to scale to 1,024 AI accelerators and beyond, with each accelerator capable of delivering more than 100 Tbps of optical connectivity. They also state that this enables “thousands of accelerators to operate as a single, unified system across multiple racks.” On the mechanical and thermal side, the design is described as liquid-cooled and optimized for high-power operation, with support for external laser small form factor pluggable (ELSFP) light sources, advanced fiber management, and serviceable rack-level designs.
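For a rough sense of scale, the two headline figures can be multiplied out. The sketch below treats both numbers as lower bounds, since the announcement says "and beyond" and "more than." It simply sums per-accelerator optical connectivity, which is an assumption made for illustration. It is not a vendor-stated aggregate, and it says nothing about topology, bisection bandwidth, or achievable throughput. The function and variable names are hypothetical.

```python
# Back-of-the-envelope sum of per-accelerator optical connectivity.
# Both inputs are lower bounds taken from the announcement; the
# aggregate is an illustrative figure, not a number the vendors quote.

ACCELERATORS = 1024          # "1,024 AI accelerators and beyond"
TBPS_PER_ACCELERATOR = 100   # "more than 100 Tbps" per accelerator


def aggregate_optical_bandwidth_tbps(accelerators: int, tbps_each: float) -> float:
    """Sum of per-accelerator optical connectivity, in Tbps."""
    return accelerators * tbps_each


total_tbps = aggregate_optical_bandwidth_tbps(ACCELERATORS, TBPS_PER_ACCELERATOR)
total_pbps = total_tbps / 1000  # 1 Pbps = 1,000 Tbps

print(f"At least {total_tbps:,.0f} Tbps (about {total_pbps:.1f} Pbps) of summed optical connectivity")
```

At the stated minimums, that works out to roughly 102.4 Pbps of summed optical connectivity across 1,024 accelerators.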

“AI infrastructure is outgrowing the limits of copper, and hyperscalers need a fundamentally new approach to scale,” said Mark Wade, CEO and co-founder of Ayar Labs. Wiwynn President and CEO William Lin added, “Together, we are enabling advanced optical I/O that delivers greater scalability and energy efficiency for cloud and hyperscale customers, powering next-generation AI data centers.”

Ayar Labs and Wiwynn also said they plan to showcase the joint AI CPO solution at the Optical Fiber Communication Conference (OFC), March 15-19, 2026, in Los Angeles. The preview is described as a rack-level system architecture enabled for high-voltage direct current (HVDC) power, featuring a 100% liquid-cooled AI system reference design with support for ELSFP SuperNova remote light sources and AI ASICs with optical engines. Access is via private, pre-arranged briefings for select customers, press, and analysts.

Source: Ayar Labs