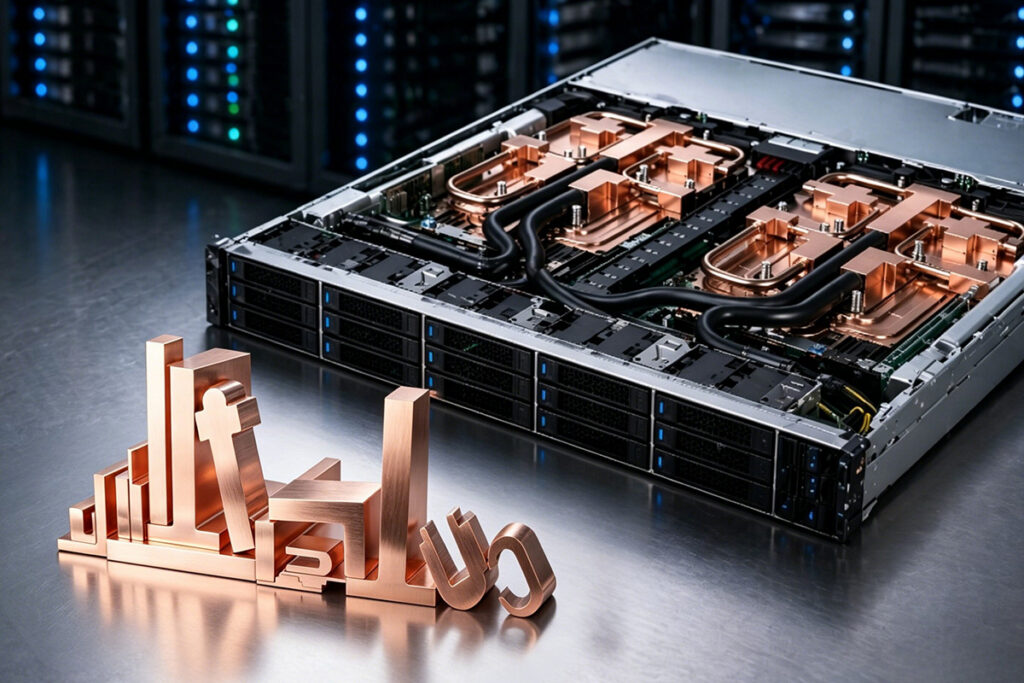

Majestic Labs has unveiled Prometheus, an AI server built around a “memory-first” architecture. The company says the design is aimed at breaking the AI memory wall, the gap between how fast processors can compute and how fast memory can supply them with data, by attaching far more memory, and more memory bandwidth, to each processor than conventional designs do.

Prometheus can be configured with up to 128 TB of memory in a standard-size server. Majestic describes the memory space as “uniform, shared and contiguous” and connected “at full bandwidth to all processing elements.” The company also claims Prometheus connects “1000x more high-speed, power-efficient memory at ultra-high bandwidth to each processor in the server,” and that a single Prometheus system can deliver performance equivalent to multiple racks of standard servers.

From a data center engineering perspective, the pitch is straightforward: if a workload is gated by memory capacity and memory bandwidth, collapsing it into fewer physical servers can change the footprint, the network design, and the power and cooling plan. But the “equivalent to multiple racks” and “unprecedented” efficiency claims are exactly the kind of numbers operators should validate with workload-specific benchmarks, power measurements, and deployment constraints before treating them as a rack-reduction plan.
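One way an operator might structure that validation is to reduce each measured run to throughput, energy efficiency, and rack density, then compare the candidate system against the existing deployment. The Python sketch below is illustrative only: the `Measurement` class, the `compare` helper, and every number in it are hypothetical placeholders, not figures published by Majestic Labs or measured on any real system.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    """One benchmark run on one deployment (all fields measured by the operator)."""
    name: str
    tokens_per_second: float  # sustained throughput on the operator's own workload
    avg_power_watts: float    # average wall power during the run
    rack_units: int           # physical space the deployment occupies

    @property
    def tokens_per_joule(self) -> float:
        # Watts are joules per second, so this is tokens per joule.
        return self.tokens_per_second / self.avg_power_watts

    @property
    def tokens_per_second_per_ru(self) -> float:
        return self.tokens_per_second / self.rack_units


def compare(candidate: Measurement, baseline: Measurement) -> dict:
    """Return candidate/baseline ratios; values above 1.0 favor the candidate."""
    return {
        "throughput_ratio": candidate.tokens_per_second / baseline.tokens_per_second,
        "efficiency_ratio": candidate.tokens_per_joule / baseline.tokens_per_joule,
        "density_ratio": (
            candidate.tokens_per_second_per_ru / baseline.tokens_per_second_per_ru
        ),
    }


# Placeholder numbers chosen only to exercise the arithmetic.
baseline = Measurement("two racks of existing servers", 1000.0, 20000.0, 84)
candidate = Measurement("single candidate server", 1000.0, 5000.0, 4)

ratios = compare(candidate, baseline)
for key, value in ratios.items():
    print(f"{key}: {value:.2f}")
```

A rack-equivalence claim holds for a given workload only if the throughput ratio stays near or above 1.0 while the efficiency and density ratios improve, which is why the inputs need to come from the operator's own benchmarks and power meters rather than vendor figures.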

Ignite AIUs and software stack

Inside Prometheus, Majestic uses proprietary AI Processing Units (AIUs) called Ignite. Majestic describes Ignite as a chip multiprocessor built around a memory-first architecture that combines datacenter-class ARM application cores with RISC-V vector and tensor cores on the same silicon and in the same memory space.

On the software side, Majestic says Prometheus is fully programmable and supports industry-standard frameworks including PyTorch, vLLM, and OpenAI’s Triton, with the goal of running existing code without changes.

“In the early days of AI, the industry ran workloads on machines that were never actually built for AI,” said Sha Rabii, co-founder and president of Majestic Labs. “The industry can no longer afford the compromise in efficiency that results from this ill-fitting pairing considering the scale that AI is reaching today.”

Majestic also says Prometheus is intended to run multi-trillion-parameter models in a single server and support “massive context windows of hundreds of millions of tokens,” along with mixture-of-experts models, agentic AI systems, graph neural networks, and tabular models.
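To put those capacity claims in context, a back-of-the-envelope estimate of weight and key-value cache memory is a useful first check. The Python sketch below uses the standard sizing arithmetic for transformer models; the model shape (layer count, key-value heads, head dimension), the 2-byte precision, and the decimal definition of a terabyte are all assumptions made for illustration, and none of them describe a model or configuration named by Majestic.

```python
TB = 10**12  # decimal terabyte; assumed, the announcement does not specify TB vs TiB


def weight_bytes(params: int, bytes_per_param: int) -> int:
    """Memory needed to hold the model weights alone."""
    return params * bytes_per_param


def kv_cache_bytes(tokens: int, layers: int, kv_heads: int,
                   head_dim: int, bytes_per_value: int) -> int:
    """Key-value cache size for one sequence: a key and a value vector
    per token, per layer, per key-value head."""
    return 2 * tokens * layers * kv_heads * head_dim * bytes_per_value


# Hypothetical model and context, chosen only to illustrate the scale.
params = 2 * 10**12          # a 2-trillion-parameter model
context_tokens = 100_000_000  # a 100-million-token context

weights = weight_bytes(params, bytes_per_param=2)
kv_cache = kv_cache_bytes(context_tokens, layers=128, kv_heads=8,
                          head_dim=128, bytes_per_value=2)
total = weights + kv_cache

print(f"weights:  {weights / TB:.1f} TB")
print(f"KV cache: {kv_cache / TB:.1f} TB")
print(f"total:    {total / TB:.1f} TB")
print(f"fits in 128 TB: {total <= 128 * TB}")
```

Under these assumptions the key-value cache, not the weights, dominates the footprint, which illustrates why very long contexts are a memory-capacity problem as much as a compute problem. Activations, framework overhead, and concurrent requests are ignored here and would add to the total.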

Prometheus is in development for early customers, with wide availability expected next year. More information is available at majestic-labs.ai.

Source: Majestic Labs