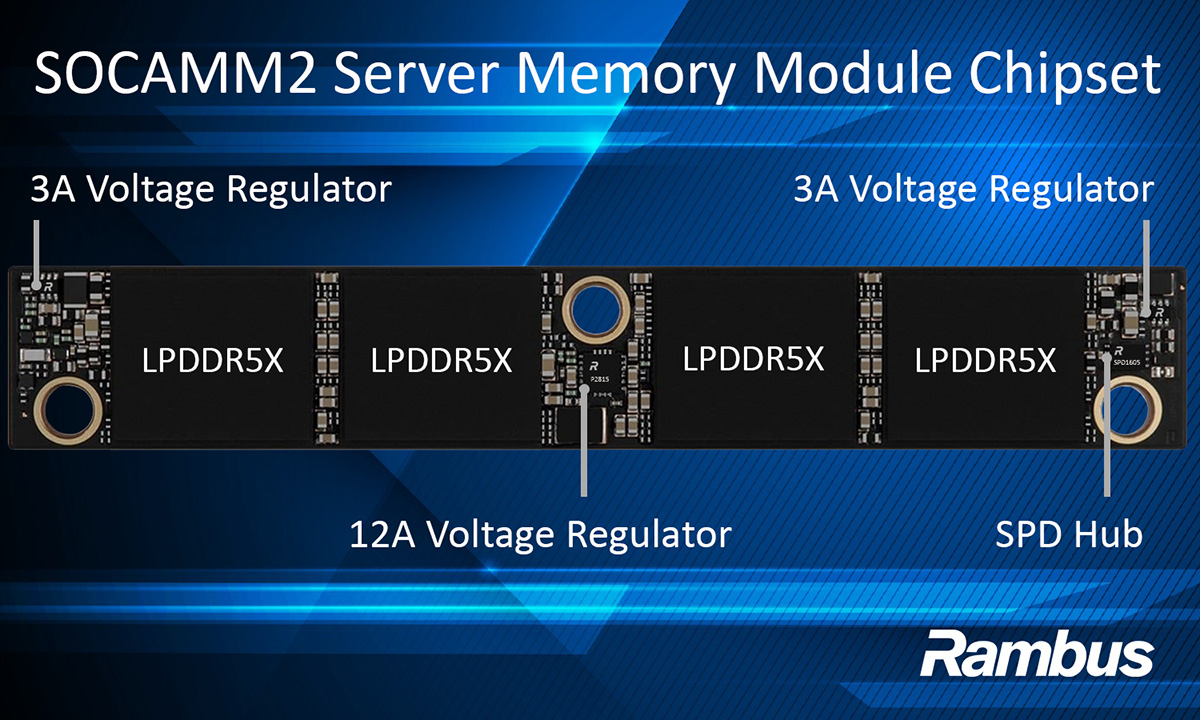

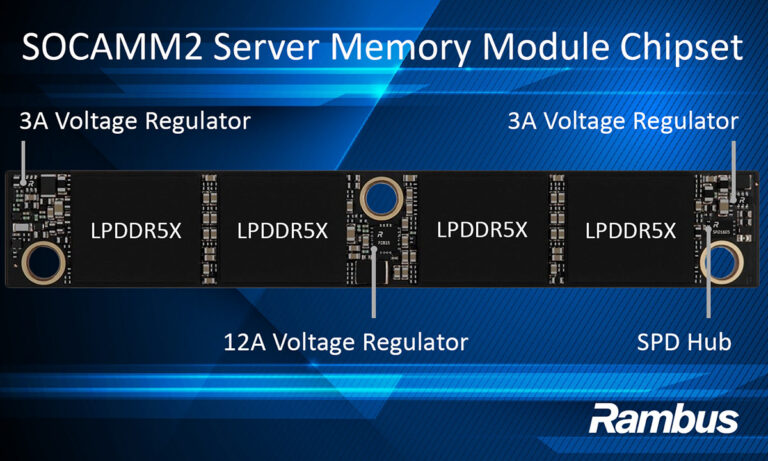

Rambus has introduced a SOCAMM2 server module chipset aimed at enabling low-power, high-performance LPDDR5X memory modules for AI server platforms. The company is targeting JEDEC-standard SOCAMM2 (Small Outline Compression Attached Memory Module) designs, which replace soldered LPDDR with detachable, upgradable modules and are intended to make LPDDR-class memory more serviceable in the data center.

The Rambus LPDDR5X SOCAMM2 chipset supports SOCAMM2 memory modules running at up to 9.6 Gb/s. Rambus describes it as the first product in a planned family of LPDDR-based server module chipsets for future AI systems, and links the effort to its work with industry partners on emerging memory architectures for AI data center infrastructure.
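To put the 9.6 Gb/s figure in context, here is a rough back-of-the-envelope sketch of how a data rate translates into theoretical peak module bandwidth. It assumes the 9.6 Gb/s number is a per-pin rate, as is usual for LPDDR5X speed grades, and it uses a 128-bit module data bus purely as an illustrative assumption; the announcement does not state a bus width, and the function and constant names are hypothetical.

```python
def peak_bandwidth_gb_per_s(data_rate_gbps_per_pin: float, bus_width_bits: int) -> float:
    """Return theoretical peak bandwidth in GB/s (decimal gigabytes).

    Multiplies the per-pin data rate (Gb/s) by the number of data pins,
    then divides by 8 to convert bits to bytes. Real-world throughput
    is lower because of refresh, command overhead, and access patterns.
    """
    if data_rate_gbps_per_pin <= 0 or bus_width_bits <= 0:
        raise ValueError("data rate and bus width must be positive")
    return data_rate_gbps_per_pin * bus_width_bits / 8


# Data rate quoted in the article, treated here as a per-pin rate (assumption).
DATA_RATE_GBPS_PER_PIN = 9.6

# Illustrative assumption only: the article does not give a module bus width.
ASSUMED_BUS_WIDTH_BITS = 128

if __name__ == "__main__":
    bandwidth = peak_bandwidth_gb_per_s(DATA_RATE_GBPS_PER_PIN, ASSUMED_BUS_WIDTH_BITS)
    print(f"Theoretical peak: {bandwidth:.1f} GB/s per module")
```

Under those assumptions the arithmetic works out to roughly 153.6 GB/s per module; a different bus width scales the result linearly, so the number should be read as an illustration, not a Rambus specification.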

At the component level, the chipset includes an SPD Hub for module identification, configuration, and telemetry, plus 12 A and 3 A voltage regulators for localized, on-module power conversion. In practical terms, the chipset focuses on the “plumbing” that makes a modular LPDDR-based server memory approach viable in real platforms: control, telemetry, and local power delivery, not just raw signaling.

SOCAMM2’s pitch is straightforward: keep LPDDR’s efficiency characteristics, but move away from permanently soldered-down memory toward modules that can be installed, serviced, and upgraded. For AI operators, memory power and board area are hard constraints, and modularity changes how systems can be maintained over their lifecycle. The engineering reality, though, is that once memory goes modular at high speed, signal integrity, power integrity, and interoperability become the make-or-break details.

“AI system architectures are evolving rapidly, and memory has become one of the most critical enablers of performance, efficiency, and scalability,” said Rami Sethi, SVP and general manager of Memory Interface Chips at Rambus. “SOCAMM2 represents an important step in bringing modular, low-power, high-performance memory into next-generation AI servers.”

Micron also weighed in on the ecosystem angle. “With AI pushing the boundaries of compute performance and power budgets, the industry needs a robust ecosystem around LPDDR-class server memory,” said Praveen Vaidyanathan, vice president and general manager of Cloud Memory Products at Micron.

More details on the product are available on Rambus’ SOCAMM2 server module chipset page.

Source: Rambus