Cerebras Systems has signed a Memorandum of Understanding (MOU) with the US Department of Energy (DOE) to explore expanded collaboration on advanced artificial intelligence (AI) and high-performance computing (HPC) technologies. The agreement establishes a framework to accelerate AI-powered scientific discovery and strengthen computing infrastructure in support of the White House’s Genesis Mission, focused on transforming scientific research with AI.

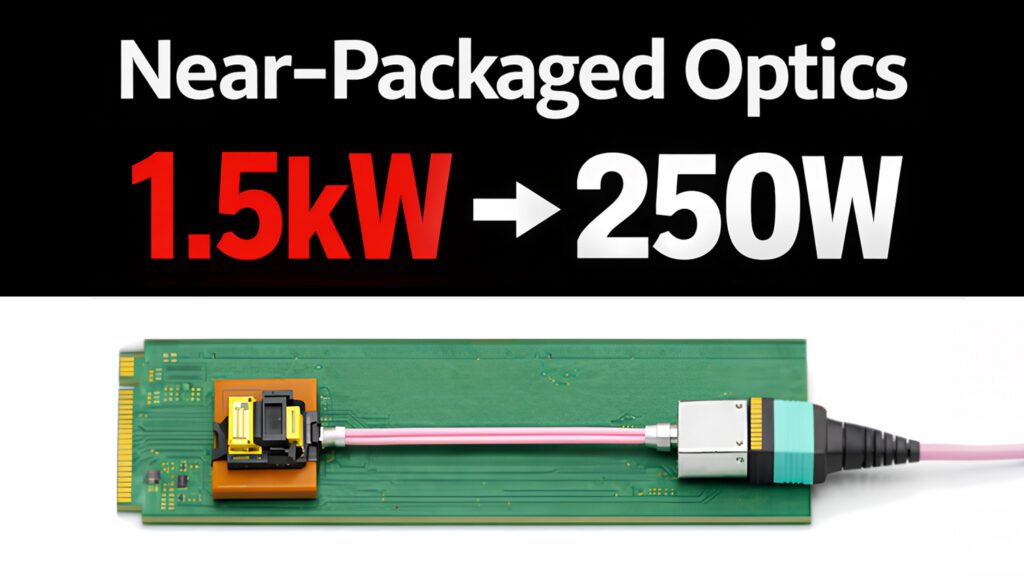

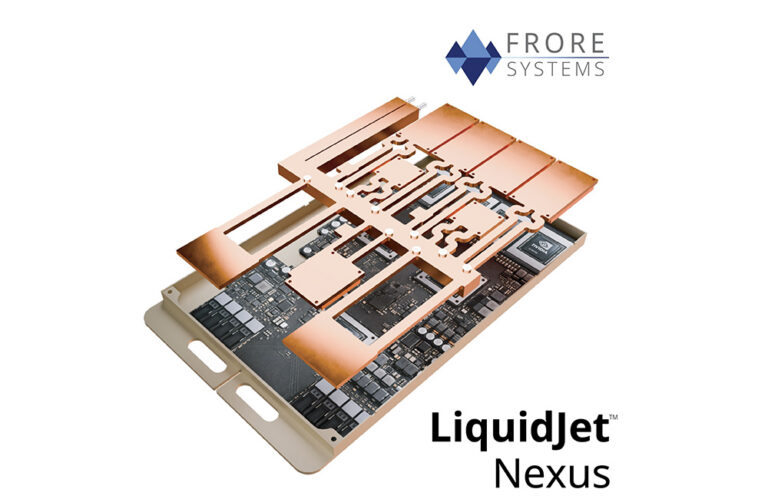

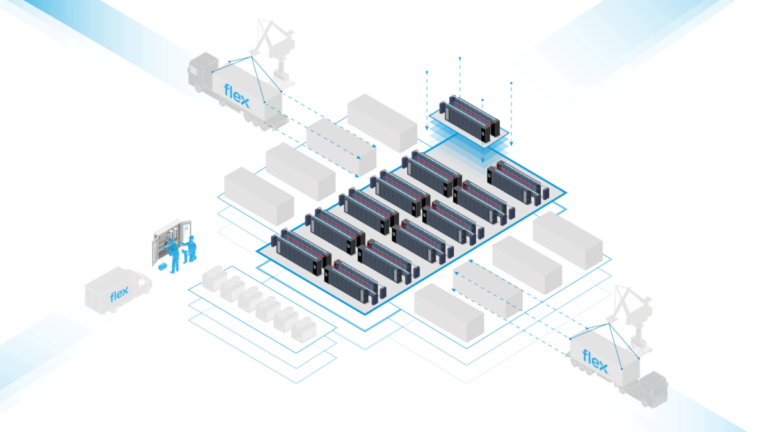

The MOU outlines joint efforts to develop large-scale scientific data sets, to collaborate on new computing architectures and on power, cooling, packaging, memory, and input/output (I/O) technologies, and to advance AI and HPC software. The partnership aims to support secure, scalable, and energy-efficient infrastructure for AI workloads. Cerebras and the DOE will explore pilot programs, joint R&D initiatives, technical exchanges, and future agreements to drive the development and deployment of wafer-scale AI supercomputers and converged AI-HPC workflows. Applications are expected in national laboratories and critical data center environments for science and security workloads.

Cerebras notes ongoing work with the DOE and its laboratories, including the deployment of Cerebras Wafer-Scale Engine (WSE) AI supercomputers for mission workloads. Capabilities demonstrated through this collaboration include AI model development for genomics and energy research, multiple Gordon Bell Prize finalist and winning projects, and performance improvements for tasks such as molecular dynamics and computational fluid dynamics in work with DOE’s Sandia, Lawrence Livermore, and Los Alamos National Laboratories, as well as the National Energy Technology Laboratory (NETL). Cerebras also highlights advances in hardware and memory co-design through the DOE’s Advanced Memory Technology program, which enabled a reported 100-fold increase in wafer-scale system capacity for scientific simulations and large-scale AI workloads.

“For years, Cerebras has worked with the Department of Energy and its national laboratories on forward-leaning topics,” said Andy Hock, Chief Strategy Officer at Cerebras. “We are proud to support the Genesis Mission and seek to work together with DOE and the National Laboratories to advance the next generation of AI-driven scientific discovery, accelerate R&D productivity, and strengthen America’s leadership in advanced computing.”

Cerebras’s Wafer-Scale Engine 3 (WSE-3), cited as the world’s largest and fastest AI processor, has been deployed in research, government, and enterprise data center environments. The company claims it delivers training and inference performance more than 20 times faster than conventional solutions, with improved power efficiency.

Source: Cerebras Systems