Rebellions has raised $400 million in a pre-IPO funding round and introduced two modular AI infrastructure offerings, RebelRack and RebelPOD, aimed at production-scale AI inference deployments in data centers.

The round was led by Mirae Asset Financial Group and the Korea National Growth Fund. Rebellions said the new financing follows a $250 million Series C in September 2025, bringing its total funding to $850 million and valuing the company at approximately $2.34 billion.

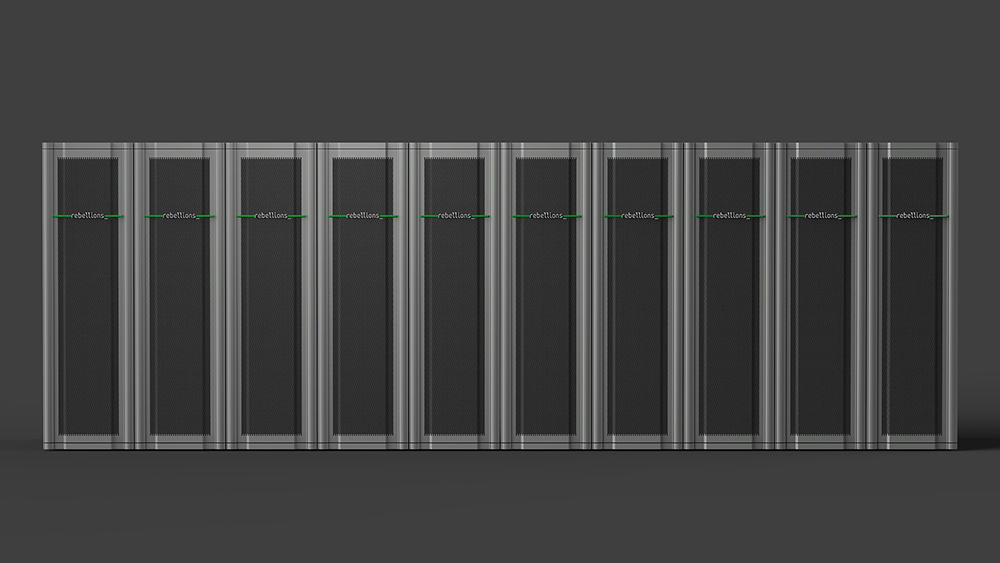

Rebellions describes RebelRack as a “production-ready unit of inference compute,” and RebelPOD as a system that integrates multiple racks into a scalable cluster for larger AI deployments. Both are described as available now, and both are built on Rebellions’ chiplet-based Rebel100 NPU platform.

For data center operators, the practical question is integration rather than branding: rack- and pod-level AI systems can simplify procurement and deployment, but only if power, cooling, and management interfaces line up with existing facility standards and day-two operations. Rebellions is emphasizing deployable systems alongside a software stack designed to run in production environments, the stage at which many AI projects hit friction.

Rebellions said it is focused on US expansion, scaled production of its Rebel100 platform, and preparation for a future IPO. The US push is being led by Chief Business Officer Marshall Choy, who recently joined the company to drive global growth.

On the software side, Rebellions said it has built a cloud-native AI stack and serving platform “underpinned by Kubernetes” and designed to work with open-source software including vLLM, PyTorch, Triton, Hugging Face, and OpenShift. The company said the platform targets high-performance distributed inference, broad model support, and consistent deployment across environments.

“AI is now measured by its ability to operate in the real world—at scale, under power constraints, and with clear economic return,” said Sunghyun Park, co-founder and CEO of Rebellions. “That shifts the center of gravity toward inference infrastructure and software that makes that infrastructure usable.”

Source: Rebellions