ASUS used NVIDIA GTC 2026 to roll out a liquid-cooled AI infrastructure lineup built around the NVIDIA Vera Rubin platform, spanning rack-scale systems, enterprise deployments, and edge hardware. The core pitch is density and thermal headroom for large AI clusters, with ASUS centering the lineup on fully liquid-cooled rack-scale configurations for NVIDIA’s Rubin-generation platforms.

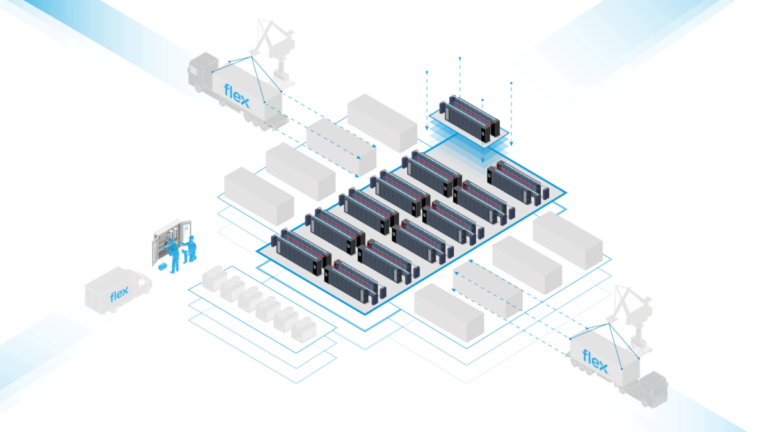

At the top end is the ASUS AI POD, a rack-scale, liquid-cooled system built on the NVIDIA Vera Rubin platform. ASUS also positions itself as a provider of NVIDIA GB300 NVL72 and NVIDIA HGX B300 systems. The company highlighted its XA VR721-E3, described as a 100% liquid-cooled rack-scale system built on NVIDIA Vera Rubin NVL72, with a TDP of up to 227 kW (MaxP) or 187 kW (MaxQ). ASUS claims up to 10x higher performance per watt for that system and positions it for trillion-parameter models.

For data center engineers, the practical detail here is the explicit rack-scale TDP numbers paired with a “100% liquid-cooled” design choice. A 187–227 kW class system is firmly in the territory where facility-side liquid cooling architecture, CDU capacity, and redundant pumping loops stop being optional design exercises and start driving the entire build, including maintenance planning and failure domains.
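To put those TDP figures in facility terms, here is a rough back-of-the-envelope sketch based on the steady-state heat balance Q = ṁ · c_p · ΔT. It is not an ASUS or NVIDIA sizing method. The assumptions are ours: the coolant is plain water, the entire rack load is rejected to the liquid loop, and the loop runs a 10 K supply-to-return temperature rise. The function name `coolant_flow_lpm` is hypothetical.

```python
# Rough coolant-flow estimate from the heat balance Q = m_dot * c_p * dT.
# Assumptions (not from ASUS): plain-water coolant, all rack heat goes to liquid.
WATER_CP_J_PER_KG_K = 4186.0    # specific heat of water; glycol mixes are lower
WATER_DENSITY_KG_PER_L = 1.0    # approximately 1 kg per litre


def coolant_flow_lpm(heat_load_kw: float, delta_t_k: float) -> float:
    """Return the volumetric flow (litres/minute) needed to carry heat_load_kw
    with a coolant temperature rise of delta_t_k kelvin."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_s = heat_load_kw * 1000.0 / (WATER_CP_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY_KG_PER_L * 60.0


if __name__ == "__main__":
    # Rack TDP figures quoted by ASUS for the XA VR721-E3.
    for label, kw in (("MaxQ", 187.0), ("MaxP", 227.0)):
        flow = coolant_flow_lpm(kw, delta_t_k=10.0)  # 10 K rise is an assumption
        print(f"{label}: {kw:.0f} kW -> {flow:.0f} L/min at a 10 K rise")
```

Under these assumptions a single rack needs on the order of 270 to 325 L/min of water, and halving the allowed temperature rise doubles the required flow. Real designs depend on the actual coolant, the CDU approach temperature, and vendor specifications.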

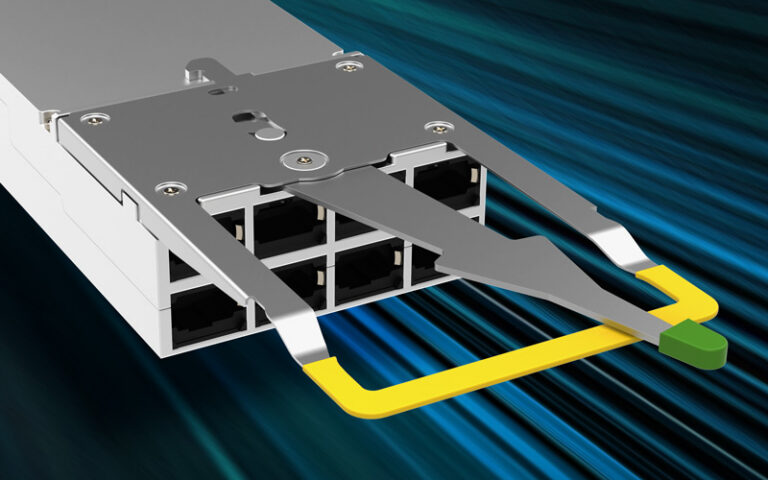

ASUS also introduced servers based on NVIDIA HGX Rubin NVL8, describing a platform with eight NVIDIA Rubin GPUs connected via sixth-generation NVIDIA NVLink and “integrated 800G bandwidth per GPU.” To support different cooling adoption paths, ASUS listed two variants: the XA NR1I-E12L as a hybrid-cooled system and the XA NR1I-E12LR as a 100% liquid-cooled system. ASUS said the hybrid XA NR1I-E12L uses direct-to-chip (D2C) liquid cooling for the NVIDIA HGX Rubin NVL8 baseboard, with air cooling for dual Intel Xeon 6 processors.

Other systems ASUS named include the XA NB3I-E12 (built on NVIDIA HGX B300), the ESC8000A-E13X (based on NVIDIA MGX and integrated with NVIDIA ConnectX-8 SuperNICs), and the ESC8000A-E13P (accelerated by NVIDIA RTX PRO 4500 Blackwell Server Edition or NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs). ASUS also described an example deployment where an ASUS ESC8000 series system supported a production-line digital twin using NVIDIA Omniverse libraries and NVIDIA’s multi-camera tracking workflow.

For edge and deskside use, ASUS listed the ExpertCenter Pro ET900N G3 (powered by the NVIDIA Grace Blackwell Ultra platform) with NVIDIA NVLink-C2C and 748 GB of coherent unified memory, plus the ultrasmall ASUS Ascent GX10 (powered by the NVIDIA Grace Blackwell Superchip). For ruggedized inference, ASUS named the PE3000N, powered by NVIDIA Jetson Thor, for which ASUS cites 2,070 TFLOPS.

ASUS also introduced ASUS AI Hub, an on-premises enterprise AI platform optimized for ESC8000-series servers that supports open-source LLMs including NVIDIA Nemotron and Gemma. ASUS said it has rolled the platform out internally to more than 10,000 employees, reporting peak loads exceeding 600 requests per hour, OCR accuracy above 80%, and efficiency gains of more than 30%.

ASUS servers are available globally, while ASUS said availability of certain other products depends on local regulatory requirements.

Source: ASUS