Accton/Edgecore Networks, 1Finity (a Fujitsu company), and Liqid have announced a partnership to develop a photonic-based solution for data centers that integrates all-photonic network connectivity for long-distance, high-bandwidth, low-latency communication. According to the companies, the solution is engineered to sustain high transmission rates with zero throughput degradation for Remote Direct Memory Access (RDMA) and Non-Volatile Memory Express over Fabrics (NVMe-oF) protocols.

The joint platform targets hyperscale operators and colocation providers that require dynamic, wide-area resource sharing across geographically distributed environments. By combining software-defined composability with photonic switching, it enables data centers to rapidly scale infrastructure resources—including central processing units (CPUs), graphics processing units (GPUs), field-programmable gate arrays (FPGAs), storage, and memory—across multiple sites, supporting AI, machine learning, and data-intensive tasks.

Accton/Edgecore contributes a suite of open networking and optical infrastructure products. These include optical wavelength switches that use dense wavelength division multiplexing (DWDM) for improved utilization and programmable control, as well as leaf and spine switches compliant with open networking standards for scalable data center fabrics. They are complemented by management switches for orchestration across network layers.

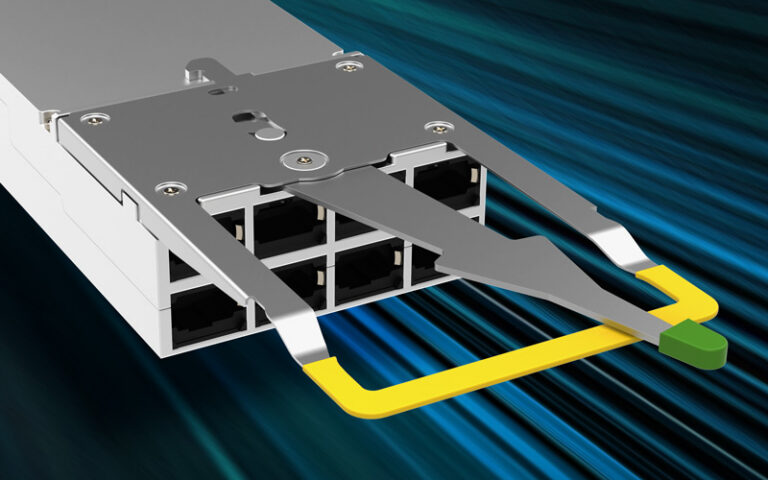

1Finity adds its optical networking platform, featuring a network interface card for energy-efficient, programmable optical transport and the Ultra Optical System P300 series of 800-gigabit ZR/ZR+ coherent pluggable transceivers, targeting edge, metro, and long-haul deployments.

Liqid supplies its PCI Express Gen5-based composable infrastructure and Matrix software, allowing real-time reconfiguration and allocation of GPUs, FPGAs, storage, and compute resources. The company says this flexibility maximizes data center performance and efficiency, particularly for AI, ML, and high-performance computing workloads.

The companies will jointly demonstrate the solution at SC25 in St. Louis, Missouri, from November 16 to 21, 2025. It is slated for deployment in the fourth quarter of 2025.

“As organizations scale AI from racks to global clusters, they need open networking that is powerful, programmable, and ready for anything,” said Mingshou Liu, President of Edgecore. “Edgecore delivers the switching fabric that moves data at the speed of AI innovation, and in partnership with Liqid and 1Finity, we’re unlocking a new era of collaborative, multi-datacenter AI infrastructure.”

“The future of AI depends on networks that are as dynamic as the compute they connect,” said Matsui Hideki, Senior Vice President and Head of Photonics System Business Unit at 1Finity. “With our modular optical platforms, we’re enabling low-latency, high-capacity interconnects that stretch Liqid’s composable resources across campuses and between datacenters. Together, we’re laying the optical foundation for tomorrow’s distributed AI clusters.”

“AI infrastructure must adapt as quickly as the workloads it supports,” stated Edgar Masri, CEO of Liqid. “By bringing software-defined composability to GPUs, memory, and storage, Liqid ensures that resources can be provisioned instantly, whether within a single rack or across multiple datacenters. This collaboration with 1Finity and Accton/Edgecore allows enterprises to achieve agility and scale that static infrastructure can never deliver.”

Source: Accton/Edgecore Networks