Penguin Solutions has announced the MemoryAI KV cache server, which it calls the industry’s first “production-ready” key-value (KV) cache server built on Compute Express Link (CXL) memory. The system targets inference bottlenecks tied to memory capacity and latency. The company positions it as a memory appliance for enterprise-scale AI inference workloads, including agentic AI, where KV cache capacity can constrain time-to-first-token (TTFT), throughput, and SLA performance.

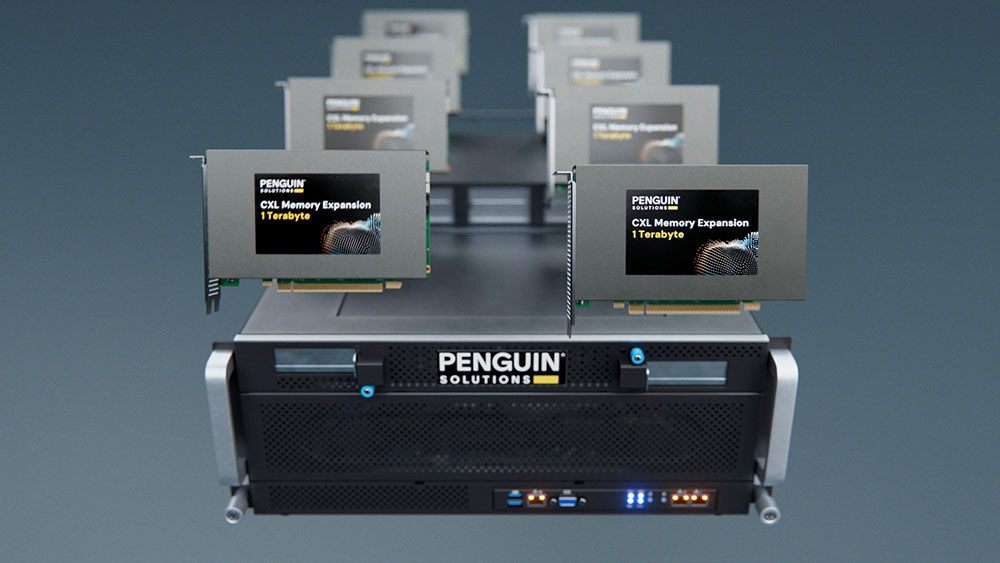

Penguin Solutions says the MemoryAI KV cache server delivers up to 11 TB of memory in a CXL-based design. That total combines 3 TB of DDR5 main memory with up to eight 1 TB CXL Add-in Cards (AICs), or 8 TB of CXL-attached capacity. The company says the design lowers latency, raises throughput, improves GPU cluster efficiency, and shortens TTFT.
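The 11 TB headline is consistent with simple addition of the stated parts. The following minimal sketch assumes the figure means DDR5 main memory plus a fully populated set of 1 TB CXL cards, counted in decimal terabytes; the constant and function names are hypothetical and do not come from Penguin Solutions.

```python
# Capacity figures as stated in the announcement (decimal TB).
# Names are hypothetical, for illustration only.
DDR5_MAIN_MEMORY_TB = 3
CXL_AIC_CAPACITY_TB = 1
MAX_CXL_AICS = 8


def total_memory_tb(num_aics: int) -> int:
    """Total server memory for a given number of populated CXL AICs."""
    if not 0 <= num_aics <= MAX_CXL_AICS:
        raise ValueError(f"num_aics must be between 0 and {MAX_CXL_AICS}")
    return DDR5_MAIN_MEMORY_TB + num_aics * CXL_AIC_CAPACITY_TB


# CXL-attached share when every card slot is populated.
cxl_tb = MAX_CXL_AICS * CXL_AIC_CAPACITY_TB
print(f"CXL-attached: {cxl_tb} TB, DDR5: {DDR5_MAIN_MEMORY_TB} TB, "
      f"total: {total_memory_tb(MAX_CXL_AICS)} TB")
```

Under this reading, most of the capacity (8 of 11 TB) sits on the CXL cards rather than in DDR5.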

The press release frames the product around the “memory wall” problem in AI inference, contrasting inference with training and tuning. Penguin Solutions describes inference and agentic AI workloads as “continuous,” “memory-bound,” and “latency-sensitive,” and cites a typical inference demand split of 30% compute-driven (GPU) and 70% memory-driven (RAM). Under that model, the company argues, insufficient memory capacity can create performance bottlenecks and leave GPUs idle.

On cluster design, Penguin Solutions highlights several benefits of disaggregating memory over CXL. It says the server supports larger context sizes and higher concurrency for enterprise tasks that need large context windows and low latency, such as real-time financial news parsing, retrieval-augmented generation (RAG) over 10-K datasets, and regulatory compliance analysis. It also claims the CXL-based KV cache forms “a new tier of cluster memory” that supplements HBM and system DRAM, and it asserts “speeds 10x faster than NVMe-based approaches” for offloading and accessing KV data.
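Why context length and concurrency drive memory demand can be shown with the standard KV cache sizing arithmetic: each token stores one key and one value vector per layer per KV head. The sketch below uses a hypothetical model shape (80 layers, 8 KV heads, head dimension 128, FP16) and assumes a pool of 8 TB of CXL-attached memory; it ignores allocator overhead, paging granularity, and any compression, so it is a rough estimate rather than a description of how the MemoryAI server or NVIDIA Dynamo actually lays out KV data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelConfig:
    """Hypothetical transformer shape; not tied to any specific product."""
    num_layers: int
    num_kv_heads: int
    head_dim: int
    bytes_per_element: int  # 2 for FP16/BF16


def kv_bytes_per_token(cfg: ModelConfig) -> int:
    # Factor of 2: one key vector and one value vector per layer per KV head.
    return 2 * cfg.num_layers * cfg.num_kv_heads * cfg.head_dim * cfg.bytes_per_element


def kv_bytes_per_session(cfg: ModelConfig, context_tokens: int) -> int:
    # KV cache grows linearly with the number of tokens held in context.
    return kv_bytes_per_token(cfg) * context_tokens


def max_resident_sessions(cfg: ModelConfig, context_tokens: int, pool_bytes: int) -> int:
    """Full-context sessions that fit in a memory pool (ignores overheads)."""
    return pool_bytes // kv_bytes_per_session(cfg, context_tokens)


if __name__ == "__main__":
    cfg = ModelConfig(num_layers=80, num_kv_heads=8, head_dim=128, bytes_per_element=2)
    cxl_pool = 8 * 10**12  # assumed: eight 1 TB CXL cards, decimal TB
    for ctx in (8_192, 32_768, 131_072):
        per_session_gib = kv_bytes_per_session(cfg, ctx) / 2**30
        sessions = max_resident_sessions(cfg, ctx, cxl_pool)
        print(f"{ctx:>7} tokens: {per_session_gib:6.1f} GiB/session, "
              f"{sessions} sessions in 8 TB")
```

For this hypothetical shape, each token costs 320 KiB of KV cache, so a single 128K-token session needs about 40 GiB, which is why long contexts at high concurrency quickly outgrow GPU HBM alone.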

The company also states the MemoryAI KV cache server is compatible with NVIDIA Dynamo, which it describes as NVIDIA’s software architecture for KV cache memory offloading. On efficiency, Penguin Solutions says the server helps maximize GPU utilization by adding large memory pools and allows clusters to be optimized by right-sizing GPUs and memory; it also claims the system “draws less power than equivalent GPU servers,” without providing power figures in the announcement.

“CXL-enabled KV cache technology delivers faster time-to-first-token, reduced time per output token, and increased overall end-to-end token throughput,” said Phil Pokorny, chief technology officer at Penguin Solutions. “These critical performance improvements enable enterprise-scale inferencing across many users who expect low latency and timely access to AI-generated insights.”

Penguin Solutions also says customers are already deploying the solution to optimize cluster performance and meet latency SLAs for production AI workloads. The company directs readers to its MemoryAI KV cache server page and says it will be showing the system at booth #1031 during the NVIDIA GTC AI Conference and Expo, March 16–19, 2026, in San Jose, California.

Source: Penguin Solutions