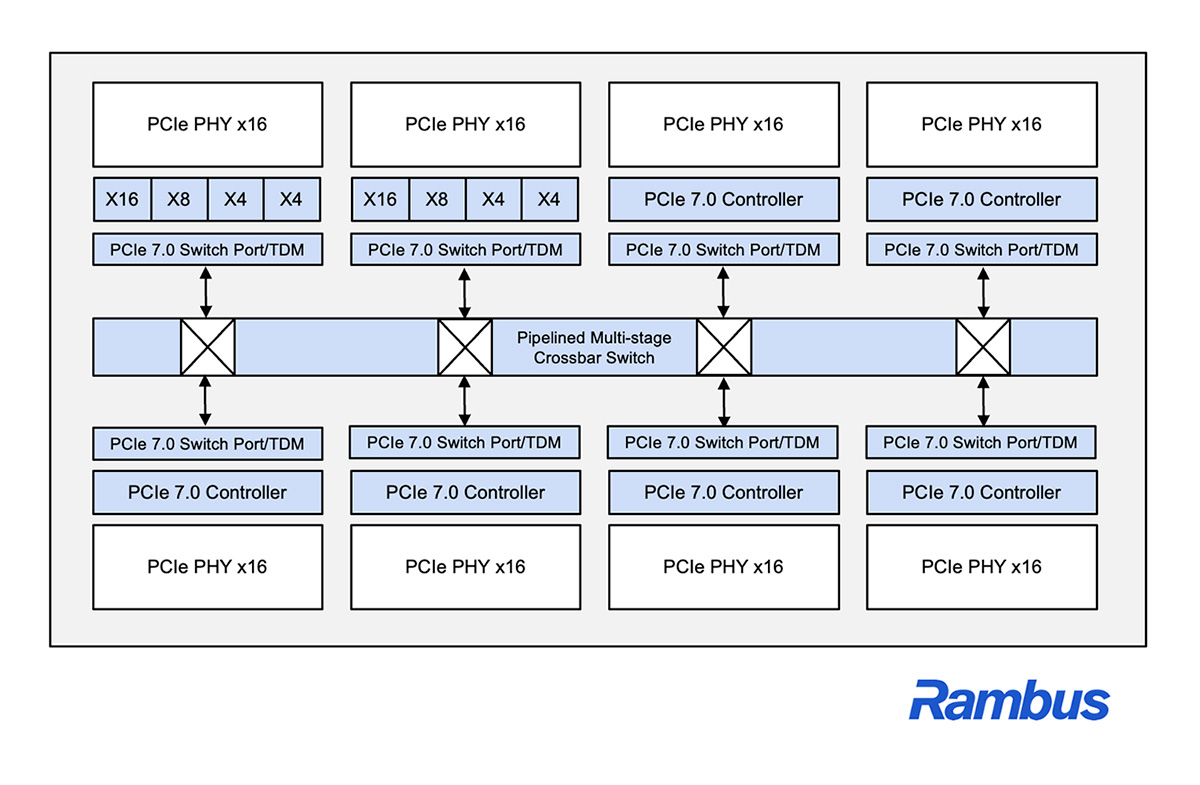

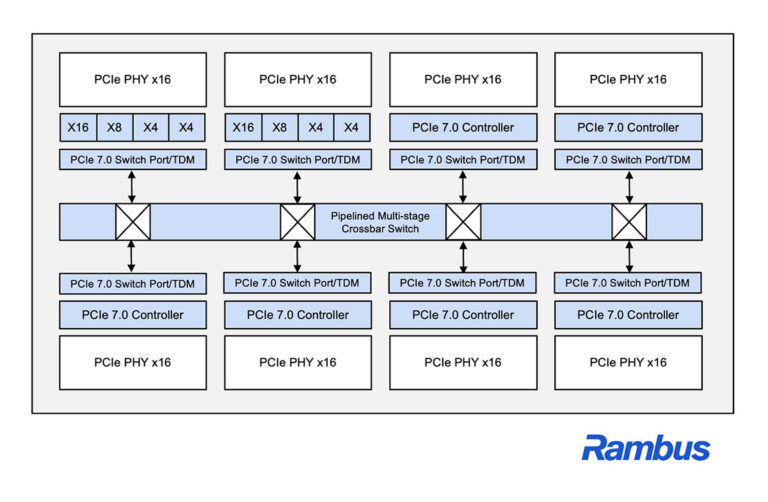

Rambus has introduced PCIe 7.0 Switch IP with Time Division Multiplexing (TDM), targeting AI, cloud, and high-performance computing (HPC) platforms that need to move large volumes of data across CPUs, GPUs, accelerators, and NVMe storage while scaling out fabric connectivity.

The Rambus PCIe 7.0 Switch IP adds TDM-based scheduling so that multiple traffic flows can share a PCIe link more efficiently. By raising link utilization, the approach is intended to simplify PCIe fabric architectures while supporting bandwidth scaling, low latency, and deterministic performance across distributed AI clusters and HPC networks.
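To make the general idea of time-division multiplexing concrete, here is a minimal Python sketch of a slot-based scheduler. It is a textbook illustration only and does not describe how the Rambus IP works internally: the flow names, the per-flow weights, and the functions `build_slot_table` and `run_tdm` are all hypothetical. Each flow owns a fixed number of time slots per repeating frame, and the link serves one packet per slot from whichever flow owns it.

```python
from collections import deque


def build_slot_table(weights):
    """Build one TDM frame: a list of flow names, one entry per time slot.

    `weights` maps a (hypothetical) flow name to its slots per frame.
    Slots are interleaved so no flow waits for a long run of other flows.
    """
    table = []
    remaining = dict(weights)
    while any(remaining.values()):
        for flow in weights:
            if remaining[flow] > 0:
                table.append(flow)
                remaining[flow] -= 1
    return table


def run_tdm(queues, slot_table, num_slots):
    """Serve one packet per slot from the flow that owns that slot.

    In strict TDM an idle owner leaves its slot empty (None). That cost
    in utilization is what buys a fixed, predictable service interval.
    """
    sent = []
    for slot in range(num_slots):
        owner = slot_table[slot % len(slot_table)]
        queue = queues[owner]
        sent.append(queue.popleft() if queue else None)
    return sent
```

With assumed weights of `{"gpu": 2, "nvme": 1}`, the frame is three slots long and the `gpu` flow is served in two of every three slots regardless of what the `nvme` flow does. That fixed share is the sense in which a slot-based schedule is deterministic. A production switch would presumably also reassign idle slots to recover utilization, but how any particular product does that is not covered by the source.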

For data center engineers and infrastructure architects, this is a reminder that PCIe fabric design is becoming a first-order system constraint as clusters grow and as more architectures lean on disaggregated or pooled resources. Better utilization of existing links can be just as important as adding lanes, especially when the design goal is to scale endpoints without turning the PCIe topology into a complexity and validation problem.

Rambus says the switch IP is optimized for next-generation AI and data center SoCs that require high bandwidth density, advanced traffic management, and scalability, with workload coverage ranging from large-scale AI training to latency-sensitive inference and general data movement. The company also notes that the PCIe 7.0 Switch IP with TDM is designed to integrate into leading-edge ASIC platforms and complements its broader PCIe 7.0 IP portfolio, including controllers, retimers, and debug solutions.

“It’s no longer sufficient to simply add more lanes or more endpoints,” said Simon Blake-Wilson, senior vice president and general manager of Silicon IP at Rambus. “With our PCIe 7.0 Switch IP with TDM, Rambus is giving system architects a new degree of freedom to scale bandwidth efficiently and deterministically, while reducing complexity and improving overall system utilization.”

More details are available on the Rambus PCI Express interface IP page.

Source: Rambus